35 Discriminant analysis

Packages for this chapter:

library(ggbiplot)

library(MASS)

library(tidyverse)

library(car)

(Note: ggbiplot loads plyr, which overlaps a lot with dplyr (filter, select etc.). We want the dplyr stuff elsewhere, so we load ggbiplot first, and the things in plyr get hidden, as shown in the Conflicts. This, despite appearances, is what we want.)

35.1 Telling whether a banknote is real or counterfeit

* A Swiss bank collected a number of known counterfeit (fake) bills over time, and sampled a number of known genuine bills of the same denomination. Is it possible to tell, from measurements taken from a bill, whether it is genuine or not? We will explore that issue here. The variables measured were:

length

right-hand width

left-hand width

top margin

bottom margin

diagonal

Read in the data from link, and check that you have 200 rows and 7 columns altogether.

Run a multivariate analysis of variance. What do you conclude? Is it worth running a discriminant analysis? (This is the same procedure as with basic MANOVAs before.)

Run a discriminant analysis. Display the output.

How many linear discriminants did you get? Is that making sense? Explain briefly.

* Using your output from the discriminant analysis, describe how each of the linear discriminants that you got is related to your original variables. (This can, maybe even should, be done crudely: “does each variable feature in each linear discriminant: yes or no?”.)

What values of your variable(s) would make LD1 large and positive?

* Find the means of each variable for each group (genuine and counterfeit bills). You can get this from your fitted linear discriminant object.

Plot your linear discriminant(s), however you like. Bear in mind that there is only one linear discriminant.

What kind of score on LD1 do genuine bills typically have? What kind of score do counterfeit bills typically have? What characteristics of a bill, therefore, would you look at to determine if a bill is genuine or counterfeit?

35.2 Urine and obesity: what makes a difference?

A study was made of the characteristics of urine of young men. The men were classified into four groups based on their degree of obesity. (The groups are labelled a, b, c, d.) Four variables were measured, x (which you can ignore), pigment creatinine, chloride and chlorine. The data are in link as a .csv file. There are 45 men altogether.

Yes, you may have seen this one before. What you found was something like this, probably also with the Box M test (which has a P-value that is small, but not small enough to be a concern):

my_url <- "http://ritsokiguess.site/datafiles/urine.csv"

urine <- read_csv(my_url)

Rows: 45 Columns: 5

── Column specification ────────────────────────────────────────────────────────

Delimiter: ","

chr (1): obesity

dbl (4): x, creatinine, chloride, chlorine

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.

response <- with(urine, cbind(creatinine, chlorine, chloride))

urine.1 <- manova(response ~ obesity, data = urine)

summary(urine.1)

Df Pillai approx F num Df den Df Pr(>F)

obesity 3 0.43144 2.2956 9 123 0.02034 *

Residuals 41

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Our aim is to understand why the MANOVA result was significant.

Read in the data again (copy the code from above) and obtain a discriminant analysis.

How many linear discriminants were you expecting? Explain briefly.

Why do you think we should pay attention to the first two linear discriminants but not the third? Explain briefly.

Plot the first two linear discriminant scores (against each other), with each obesity group being a different colour.

* Looking at your plot, discuss how (if at all) the discriminants separate the obesity groups. (Where does each obesity group fall on the plot?)

* Obtain a table showing observed and predicted obesity groups. Comment on the accuracy of the predictions.

Do your conclusions from (here) and (here) appear to be consistent?

35.3 Understanding a MANOVA

One use of discriminant analysis is to understand the results of a MANOVA. This question is a followup to a previous MANOVA that we did, the one with two variables y1 and y2 and three groups a through c. The data were in link.

Read the data in again and run the MANOVA that you did before.

Run a discriminant analysis “predicting” group from the two response variables. Display the output.

* In the output from the discriminant analysis, why are there exactly two linear discriminants LD1 and LD2?

* From the output, how would you say that the first linear discriminant LD1 compares in importance to the second one LD2: much more important, more important, equally important, less important, much less important? Explain briefly.

Obtain a plot of the discriminant scores.

Describe briefly how LD1 and/or LD2 separate the groups. Does your picture confirm the relative importance of LD1 and LD2 that you found back in part (here)? Explain briefly.

What makes group a have a low score on LD1? There are two steps that you need to make: consider the means of group a on variables y1 and y2 and how they compare to the other groups, and consider how y1 and y2 play into the score on LD1.

Obtain predictions for the group memberships of each observation, and make a table of the actual group memberships against the predicted ones. How many of the observations were wrongly classified?

35.4 What distinguishes people who do different jobs?

244 people work at a certain company. They each have one of three jobs: customer service, mechanic, dispatcher. In the data set, these are labelled 1, 2 and 3 respectively. In addition, they each are rated on scales called outdoor, social and conservative. Do people with different jobs tend to have different scores on these scales, or, to put it another way, if you knew a person’s scores on outdoor, social and conservative, could you say something about what kind of job they were likely to hold? The data are in link.

Read in the data and display some of it.

Note the types of each of the variables, and create any new variables that you need to.

Run a multivariate analysis of variance to convince yourself that there are some differences in scale scores among the jobs.

Run a discriminant analysis and display the output.

Which is the more important, LD1 or LD2? How much more important? Justify your answer briefly.

Describe what values for an individual on the scales will make each of LD1 and LD2 high.

The first group of employees, customer service, have the highest mean on social and the lowest mean on both of the other two scales. Would you expect the customer service employees to score high or low on LD1? What about LD2?

Plot your discriminant scores (which you will have to obtain first), and see if you were right about the customer service employees in terms of LD1 and LD2. The job names are rather long, and there are a lot of individuals, so it is probably best to plot the scores as coloured circles with a legend saying which colour goes with which job (rather than labelling each individual with the job they have).

* Obtain predicted job allocations for each individual (based on their scores on the three scales), and tabulate the true jobs against the predicted jobs. How would you describe the quality of the classification? Is that in line with what the plot would suggest?

Consider an employee with these scores: 20 on outdoor, 17 on social and 8 on conservative. What job do you think they do, and how certain are you about that? Use predict, first making a data frame out of the values to predict for.

35.5 Observing children with ADHD

A number of children with ADHD were observed by their mother or their father (only one parent observed each child). Each parent was asked to rate occurrences of behaviours of four different types, labelled q1 through q4 in the data set. Also recorded was the identity of the parent doing the observation for each child: 1 is father, 2 is mother.

Can we tell (without looking at the parent column) which parent is doing the observation? Research suggests that rating the degree of impairment in different categories depends on who is doing the rating: for example, mothers may feel that a child has difficulty sitting still, while fathers, who might do more observing of a child at play, might think of such a child as simply being “active” or “just being a kid”. The data are in link.

Read in the data and confirm that you have four ratings and a column labelling the parent who made each observation.

Run a suitable discriminant analysis and display the output.

Which behaviour item or items seem to be most helpful at distinguishing the parent making the observations? Explain briefly.

Obtain the predictions from the lda, and make a suitable plot of the discriminant scores, bearing in mind that you only have one LD. Do you think there will be any misclassifications? Explain briefly.

Obtain the predicted group memberships and make a table of actual vs. predicted. Were there any misclassifications? Explain briefly.

Re-run the discriminant analysis using cross-validation, and again obtain a table of actual and predicted parents. Is the pattern of misclassification different from before? Hints: (i) Bear in mind that there is no predict step this time, because the cross-validation output includes predictions; (ii) use a different name for the predictions this time because we are going to do a comparison in a moment.

Display the original data (that you read in from the data file) side by side with two sets of posterior probabilities: the ones that you obtained with predict before, and the ones from the cross-validated analysis. Comment briefly on whether the two sets of posterior probabilities are similar. Hints: (i) use data.frame rather than cbind, for reasons that I explain elsewhere; (ii) round the posterior probabilities to 3 decimals before you display them. There are only 29 rows, so look at them all. I am going to add the LD1 scores to my output and sort by that, but you don’t need to. (This is for something I am going to add later.)

Row 17 of your (original) data frame above, row 5 of the output in the previous part, is the mother that was misclassified as a father. Why is it that the cross-validated posterior probabilities are 1 and 0, while the previous posterior probabilities are a bit less than 1 and a bit more than 0?

Find the parents where the cross-validated posterior probability of being a father is “non-trivial”: that is, not close to zero and not close to 1. (You will have to make a judgement about what “close to zero or 1” means for you.) What do these parents have in common, all of them or most of them?

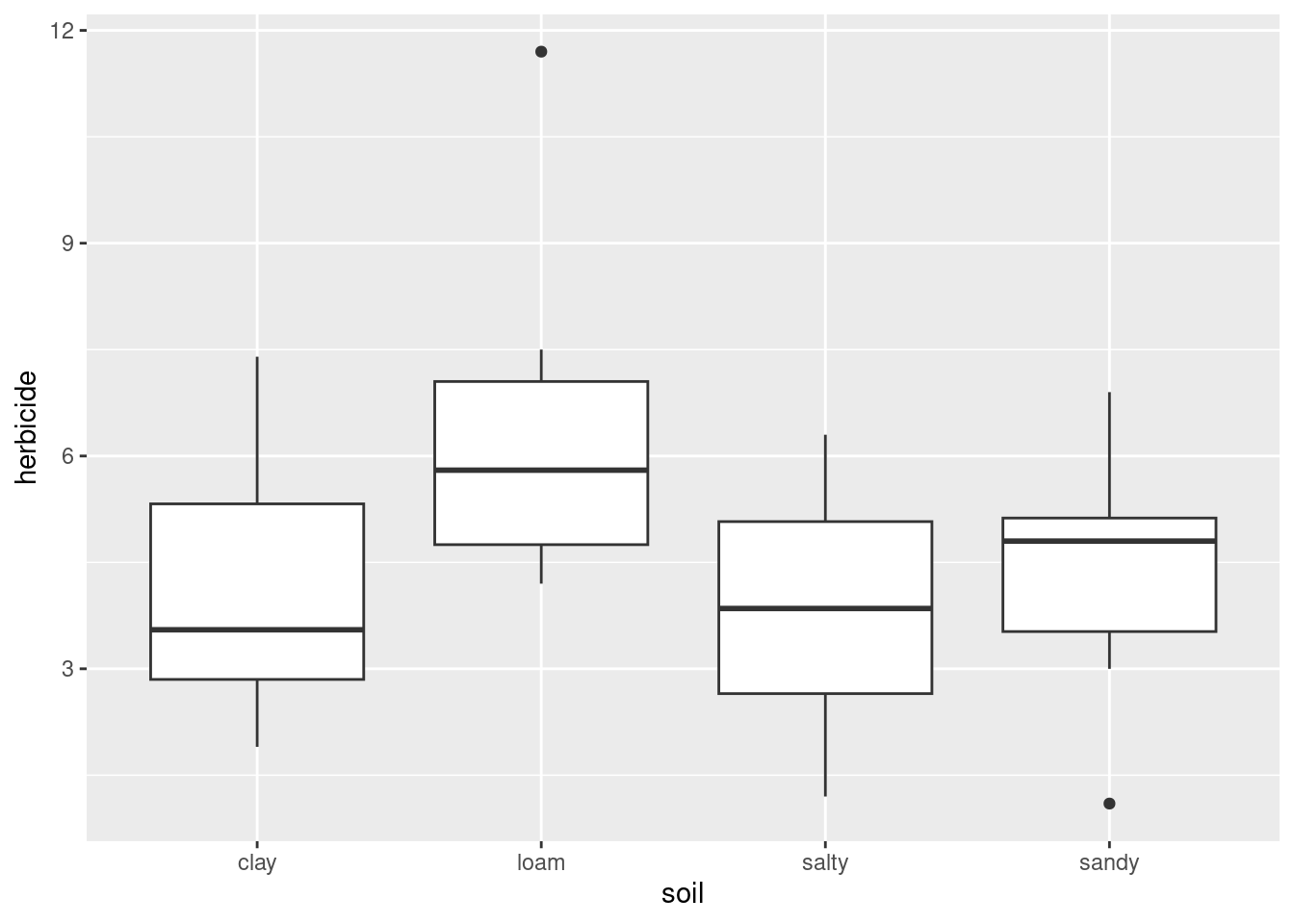

35.6 Growing corn

A new type of corn seed has been developed. The people developing it want to know if the type of soil the seed is planted in has an impact on how well the seed performs, and if so, what kind of impact. Three outcome measures were used: the yield of corn produced (from a fixed amount of seed), the amount of water needed, and the amount of herbicide needed. The data are in link. 32 fields were planted with the seed, 8 fields with each soil type.

Read in the data and verify that you have 32 observations with the correct variables.

Run a multivariate analysis of variance to see whether the type of soil has any effect on any of the variables. What do you conclude from it?

Run a discriminant analysis on these data, “predicting” soil type from the three response variables. Display the results.

* Which linear discriminants seem to be worth paying attention to? Why did you get three linear discriminants? Explain briefly.

Which response variables do the important linear discriminants depend on? Answer this by extracting something from your discriminant analysis output.

Obtain predictions for the discriminant analysis. (You don’t need to do anything with them yet.)

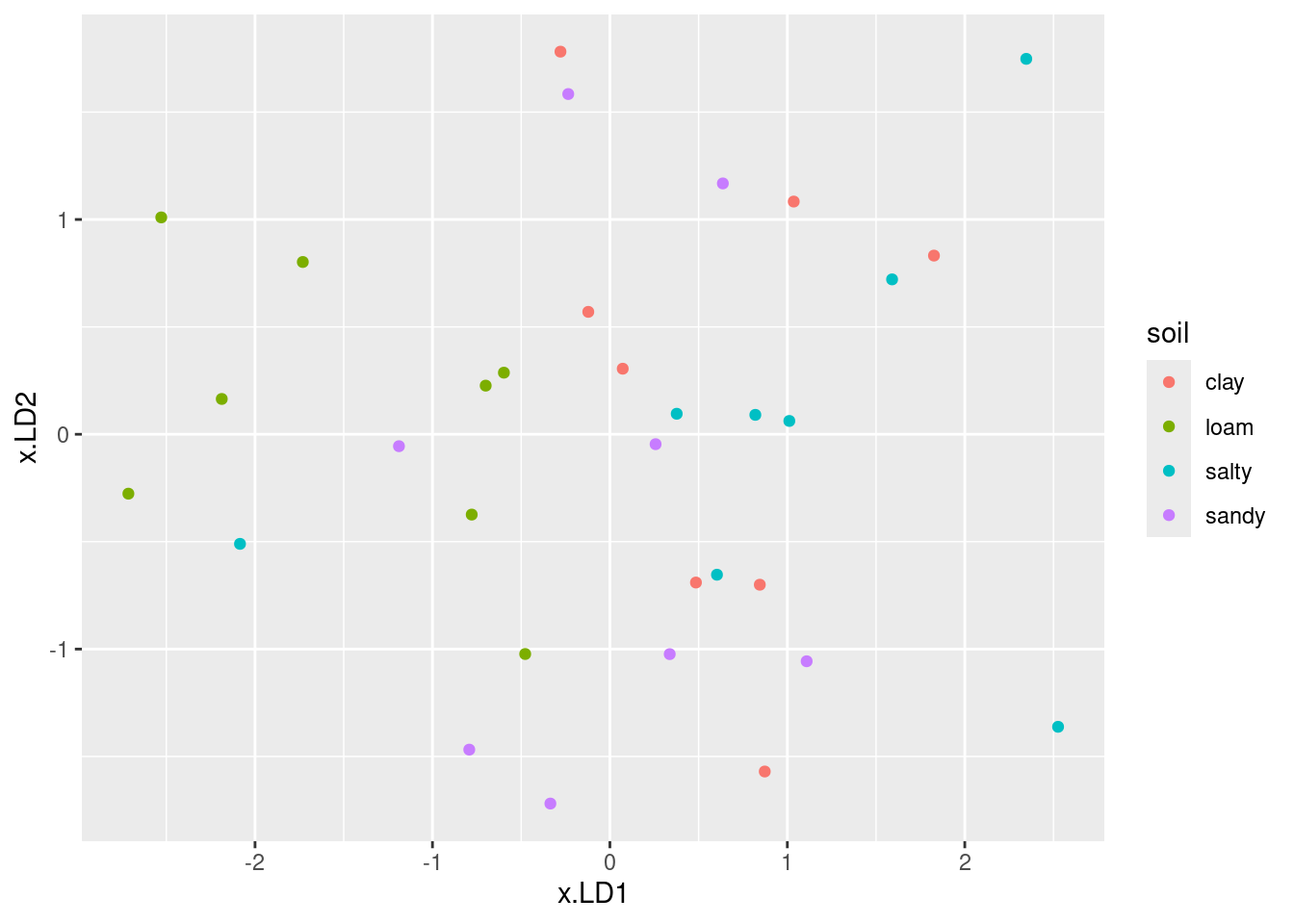

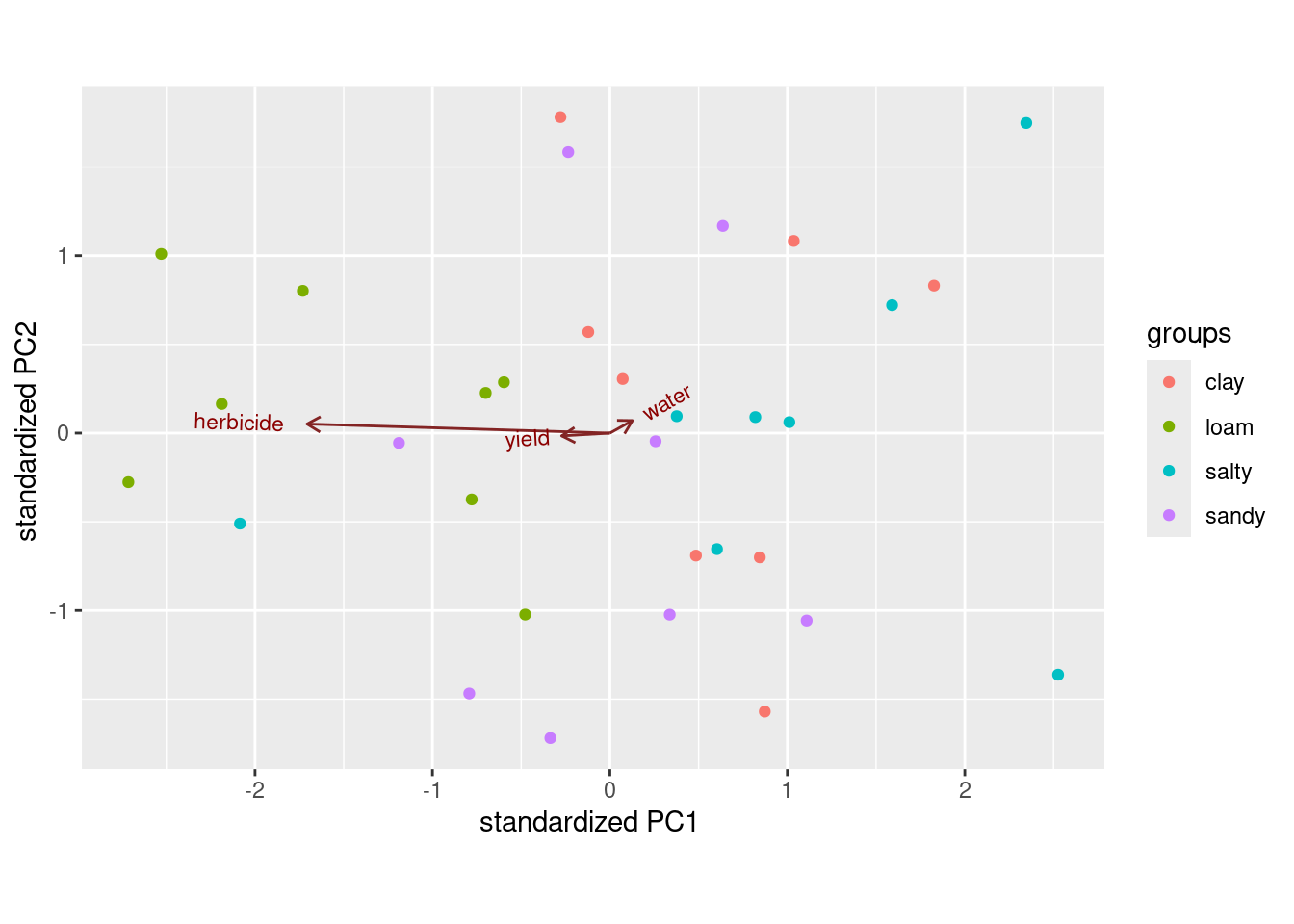

Plot the first two discriminant scores against each other, coloured by soil type. You’ll have to start by making a data frame containing what you need.

On your plot that you just made, explain briefly how LD1 distinguishes at least one of the soil types.

On your plot, does LD2 appear to do anything to separate the groups? Is this surprising given your earlier findings? Explain briefly.

Make a table of actual and predicted soil group. Which soil type was classified correctly the most often?

35.7 Understanding athletes’ height, weight, sport and gender

On a previous assignment, we used MANOVA on the athletes data to demonstrate that there was a significant relationship between the combination of the athletes’ height and weight, with the sport they play and the athlete’s gender. The problem with MANOVA is that it doesn’t give any information about the kind of relationship. To understand that, we need to do discriminant analysis, which is the purpose of this question.

The data can be found at link.

Once again, read in and display (some of) the data, bearing in mind that the data values are separated by tabs. (This ought to be a free two marks.)

Use unite to make a new column in your data frame which contains the sport-gender combination. Display it. (You might like to display only a few columns so that it is clear that you did the right thing.) Hint: you’ve seen unite in the peanuts example in class.

Run a discriminant analysis “predicting” sport-gender combo from height and weight. Display the results. (No comment needed yet.)

What kind of height and weight would make an athlete have a large (positive) score on LD1? Explain briefly.

Make a guess at the sport-gender combination that has the highest score on LD1. Why did you choose the combination you did?

What combination of height and weight would make an athlete have a small (that is, very negative) score on LD2? Explain briefly.

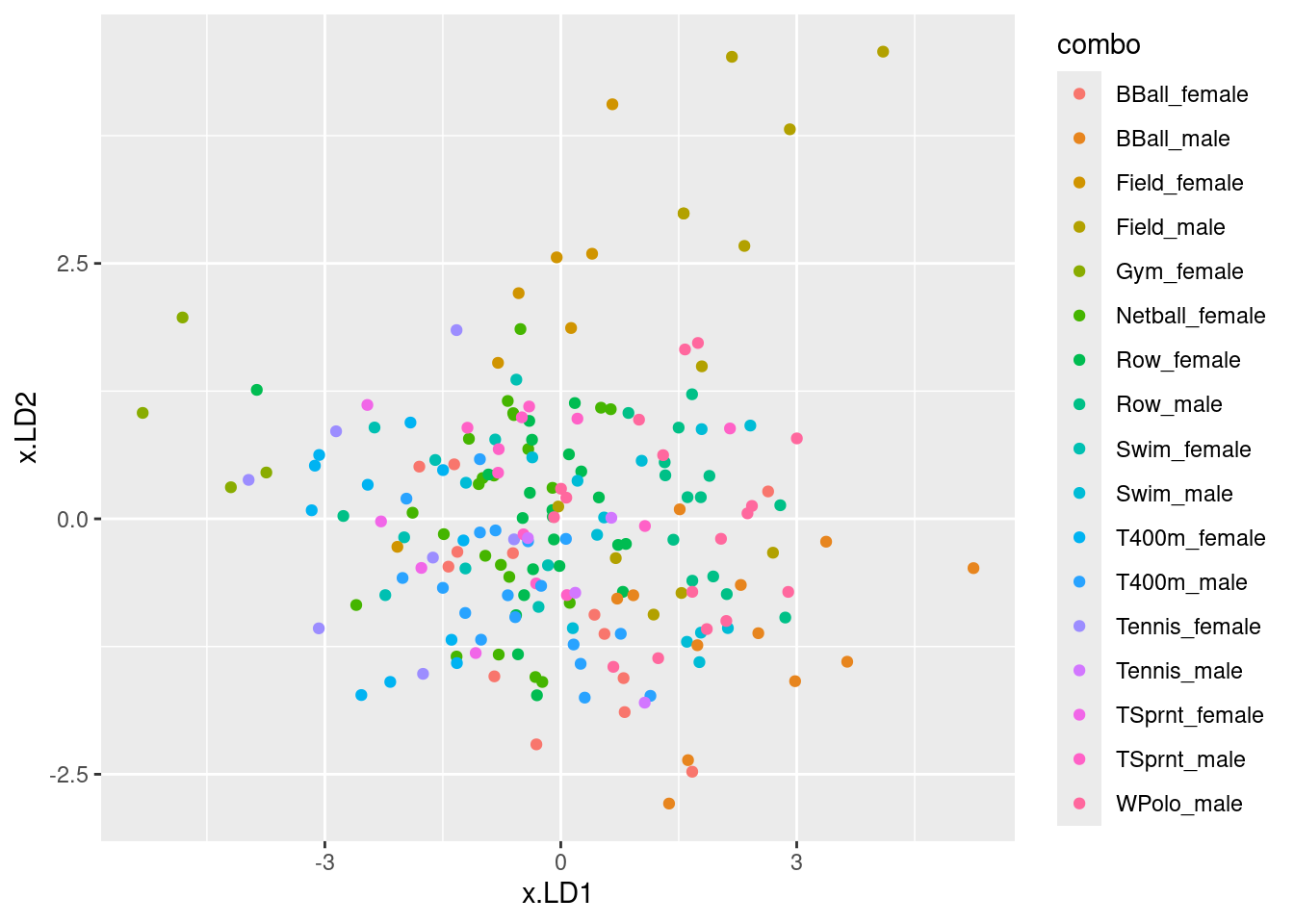

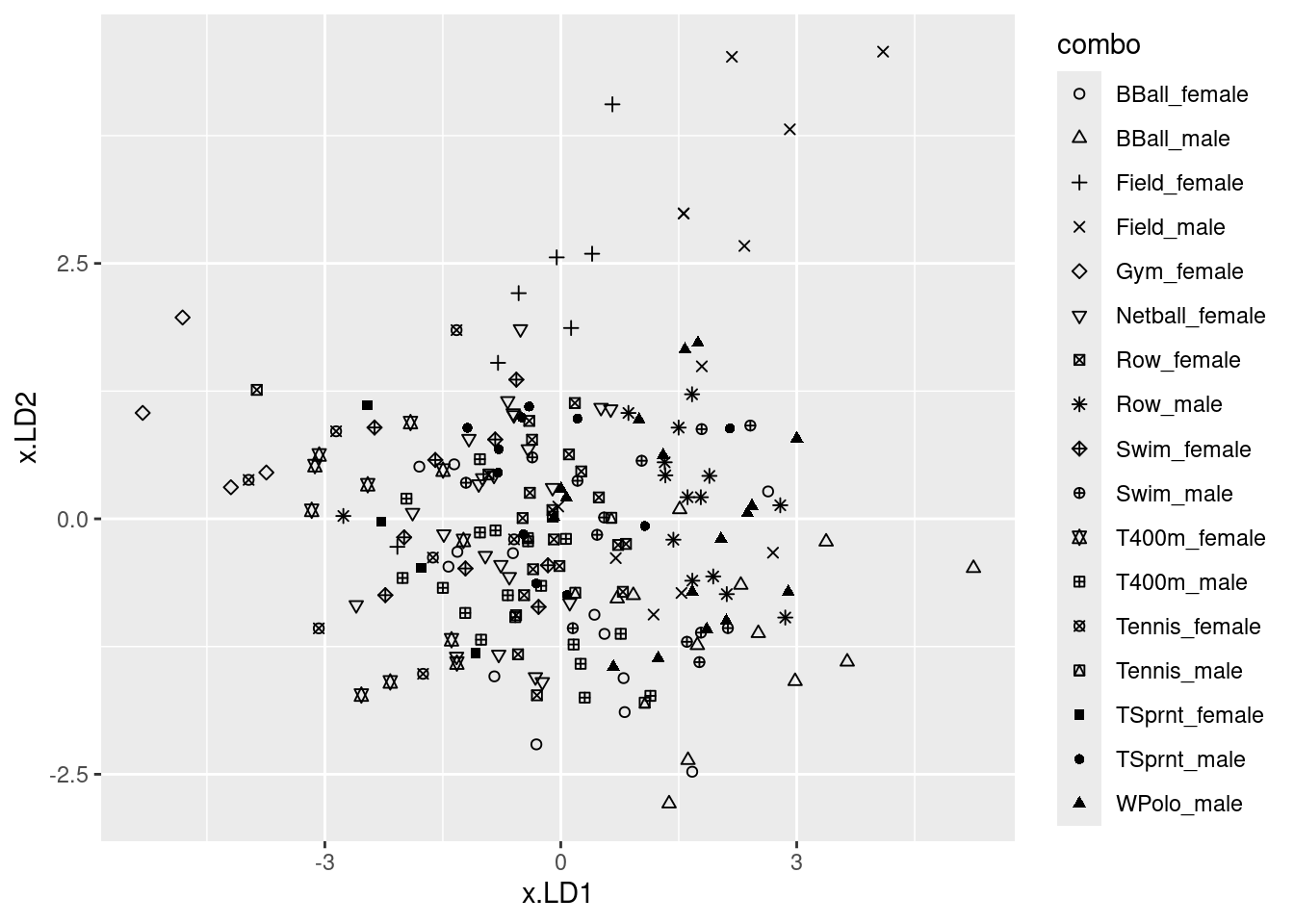

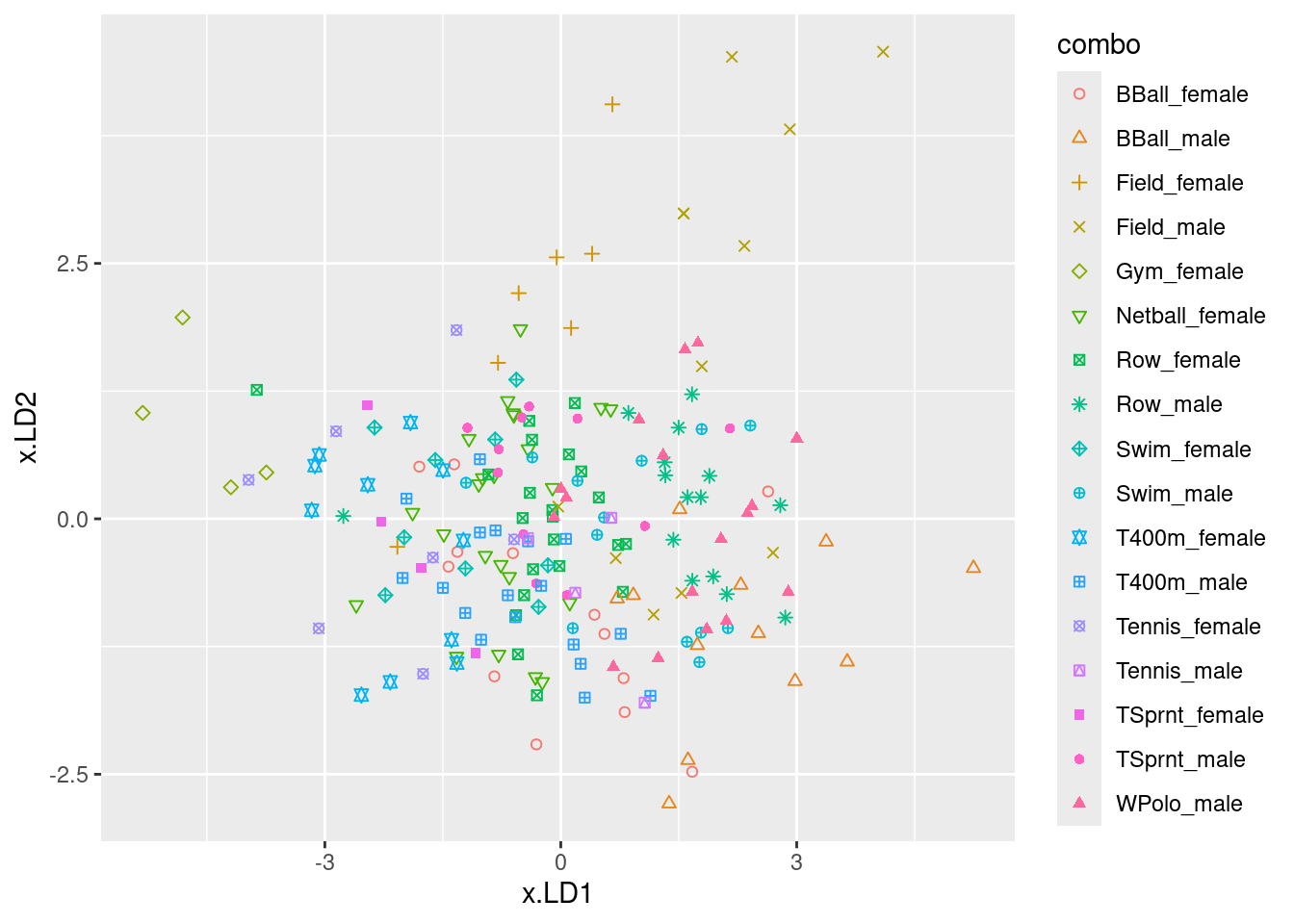

Obtain predictions for the discriminant analysis, and use these to make a plot of LD1 score against LD2 score, with the individual athletes distinguished by what sport they play and gender they are. (You can use colour to distinguish them, or you can use shapes. If you want to go the latter way, there are clues in my solutions to the MANOVA question about these athletes.)

Look on your graph for the four athletes with the smallest (most negative) scores on LD2. What do they have in common? Does this make sense, given your answer to part (here)? Explain briefly.

Obtain a (very large) square table, or a (very long) table with frequencies, of actual and predicted sport-gender combinations. You will probably have to make the square table very small to fit it on the page. For that, displaying the columns in two or more sets is OK (for example, six columns and all the rows, six more columns and all the rows, then the last five columns for all the rows). Are there any sport-gender combinations that seem relatively easy to classify correctly? Explain briefly.

My solutions follow:

35.8 Telling whether a banknote is real or counterfeit

* A Swiss bank collected a number of known counterfeit (fake) bills over time, and sampled a number of known genuine bills of the same denomination. Is it possible to tell, from measurements taken from a bill, whether it is genuine or not? We will explore that issue here. The variables measured were:

length

right-hand width

left-hand width

top margin

bottom margin

diagonal

- Read in the data from link, and check that you have 200 rows and 7 columns altogether.

Solution

Check the data file first. It’s aligned in columns, thus:

my_url <- "http://ritsokiguess.site/datafiles/swiss1.txt"

swiss <- read_table(my_url)

── Column specification ────────────────────────────────────────────────────────

cols(

length = col_double(),

left = col_double(),

right = col_double(),

bottom = col_double(),

top = col_double(),

diag = col_double(),

status = col_character()

)

swiss

Yep, 200 rows and 7 columns.

\(\blacksquare\)

- Run a multivariate analysis of variance. What do you conclude? Is it worth running a discriminant analysis? (This is the same procedure as with basic MANOVAs before.)

Solution

Small-m manova will do here:

response <- with(swiss, cbind(length, left, right, bottom, top, diag))

swiss.1 <- manova(response ~ status, data = swiss)

summary(swiss.1)

Df Pillai approx F num Df den Df Pr(>F)

status 1 0.92415 391.92 6 193 < 2.2e-16 ***

Residuals 198

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

summary(BoxM(response, swiss$status))

Box's M Test

Chi-Squared Value = 121.8991 , df = 21 and p-value: 3.2e-16

That is a very significant Box’s M test, which means that we shouldn’t trust the MANOVA at all. It is only the fact that the MANOVA is so significant that provides any evidence that the discriminant analysis is worth doing.

Extra: you might be wondering whether you had to go to all that trouble to make the response variable. Would this work?

response2 <- swiss %>% select(length:diag)

swiss.1a <- manova(response2 ~ status, data = swiss)

Error in model.frame.default(formula = response2 ~ status, data = swiss, : invalid type (list) for variable 'response2'

No, because response2 needs to be an R matrix, and it isn’t:

class(response2)

[1] "tbl_df" "tbl" "data.frame"

The error message was a bit cryptic (nothing unusual there), but a data frame (to R) is a special kind of list, so R didn’t like response2 being a data frame, which it thought was a list.

This, however, works, since it turns the data frame into a matrix:

response4 <- swiss %>% select(length:diag) %>% as.matrix()

swiss.2a <- manova(response4 ~ status, data = swiss)

summary(swiss.2a)

Df Pillai approx F num Df den Df Pr(>F)

status 1 0.92415 391.92 6 193 < 2.2e-16 ***

Residuals 198

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Anyway, the conclusion: the status of a bill (genuine or counterfeit) definitely has an influence on some or all of those other variables, since the P-value \(2.2 \times 10^{-16}\) (or less) is really small. So it is apparently worth running a discriminant analysis to figure out where the differences lie.

As a piece of strategy, for creating the response matrix, you can always either use cbind, which creates a matrix directly, or you can use select, which is often easier but creates a data frame, and then turn that into a matrix using as.matrix. As long as you end up with a matrix, it’s all good.

\(\blacksquare\)

- Run a discriminant analysis. Display the output.

Solution

Now we forget about all that response stuff. For a discriminant analysis, the grouping variable (or combination of the grouping variables) is the “response”, and the quantitative ones are “explanatory”:

swiss.3 <- lda(status ~ length + left + right + bottom + top + diag, data = swiss)

swiss.3

Call:

lda(status ~ length + left + right + bottom + top + diag, data = swiss)

Prior probabilities of groups:

counterfeit genuine

0.5 0.5

Group means:

length left right bottom top diag

counterfeit 214.823 130.300 130.193 10.530 11.133 139.450

genuine 214.969 129.943 129.720 8.305 10.168 141.517

Coefficients of linear discriminants:

LD1

length 0.005011113

left 0.832432523

right -0.848993093

bottom -1.117335597

top -1.178884468

diag 1.556520967

\(\blacksquare\)

- How many linear discriminants did you get? Is that making sense? Explain briefly.

Solution

I got one discriminant, which makes sense because there are two groups, and the smaller of 6 (variables, not counting the grouping one) and \(2-1\) is 1.
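The counting rule used here can be written as a one-line function. This is only a sketch: the name n_discriminants is made up for illustration and is not part of MASS or any other package.

```r
# Hypothetical helper (not from any package): the number of linear
# discriminants is the smaller of the number of measured variables
# and the number of groups minus 1.
n_discriminants <- function(n_vars, n_groups) {
  min(n_vars, n_groups - 1)
}

n_discriminants(6, 2) # banknotes: 6 measurements, 2 groups
n_discriminants(3, 4) # urine data later in the chapter: 3 measurements, 4 groups
```

The same rule applies to every question in this chapter, so it is a quick check on any lda output before you start interpreting it.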

\(\blacksquare\)

- * Using your output from the discriminant analysis, describe how each of the linear discriminants that you got is related to your original variables. (This can, maybe even should, be done crudely: “does each variable feature in each linear discriminant: yes or no?”.)

Solution

This is the Coefficients of Linear Discriminants. Make a call about whether each of those coefficients is close to zero (small in size compared to the others), or definitely positive or definitely negative. These are judgement calls: either you can say that LD1 depends mainly on diag (treating the other coefficients as “small” or close to zero), or you can say that LD1 depends on everything except length.

\(\blacksquare\)

- What values of your variable(s) would make LD1 large and positive?

Solution

Depending on your answer to the previous part: If you said that only diag was important, diag being large would make LD1 large and positive. If you said that everything but length was important, then it’s a bit more complicated: left and diag large, right, bottom and top small (since their coefficients are negative).
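To see why the signs of the coefficients matter, it can help to build the discriminant scores by hand. The sketch below uses the built-in iris data rather than the banknotes, so that it runs without downloading anything. It assumes, as I understand predict for lda objects, that each variable is centred at the prior-weighted average of the group means before being multiplied by its coefficient; the comparison at the end checks that assumption for this example rather than taking it on trust.

```r
library(MASS)

# Built-in iris data stands in for the banknotes (an assumption made
# purely so that the example is self-contained).
iris.lda <- lda(Species ~ ., data = iris)

# The four measurements as a matrix, in the same order as in the formula.
X <- as.matrix(iris[, 1:4])

# Centre each variable at the prior-weighted average of the group means
# (assumed to be what predict does internally).
centre <- colSums(iris.lda$prior * iris.lda$means)

# A discriminant score is a sum of (centred value) times (coefficient).
by_hand <- scale(X, center = centre, scale = FALSE) %*% iris.lda$scaling

# Compare with the scores that predict produces.
same <- isTRUE(all.equal(unname(by_hand), unname(predict(iris.lda)$x)))
same
```

A variable with a positive coefficient pushes the score up when it is above its centre, and a variable with a negative coefficient pushes it up when it is below, which is the reasoning used in the solution above.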

\(\blacksquare\)

- * Find the means of each variable for each group (genuine and counterfeit bills). You can get this from your fitted linear discriminant object.

Solution

swiss.3$means

length left right bottom top diag

counterfeit 214.823 130.300 130.193 10.530 11.133 139.450

genuine 214.969 129.943 129.720 8.305 10.168 141.517

\(\blacksquare\)

- Plot your linear discriminant(s), however you like. Bear in mind that there is only one linear discriminant.

Solution

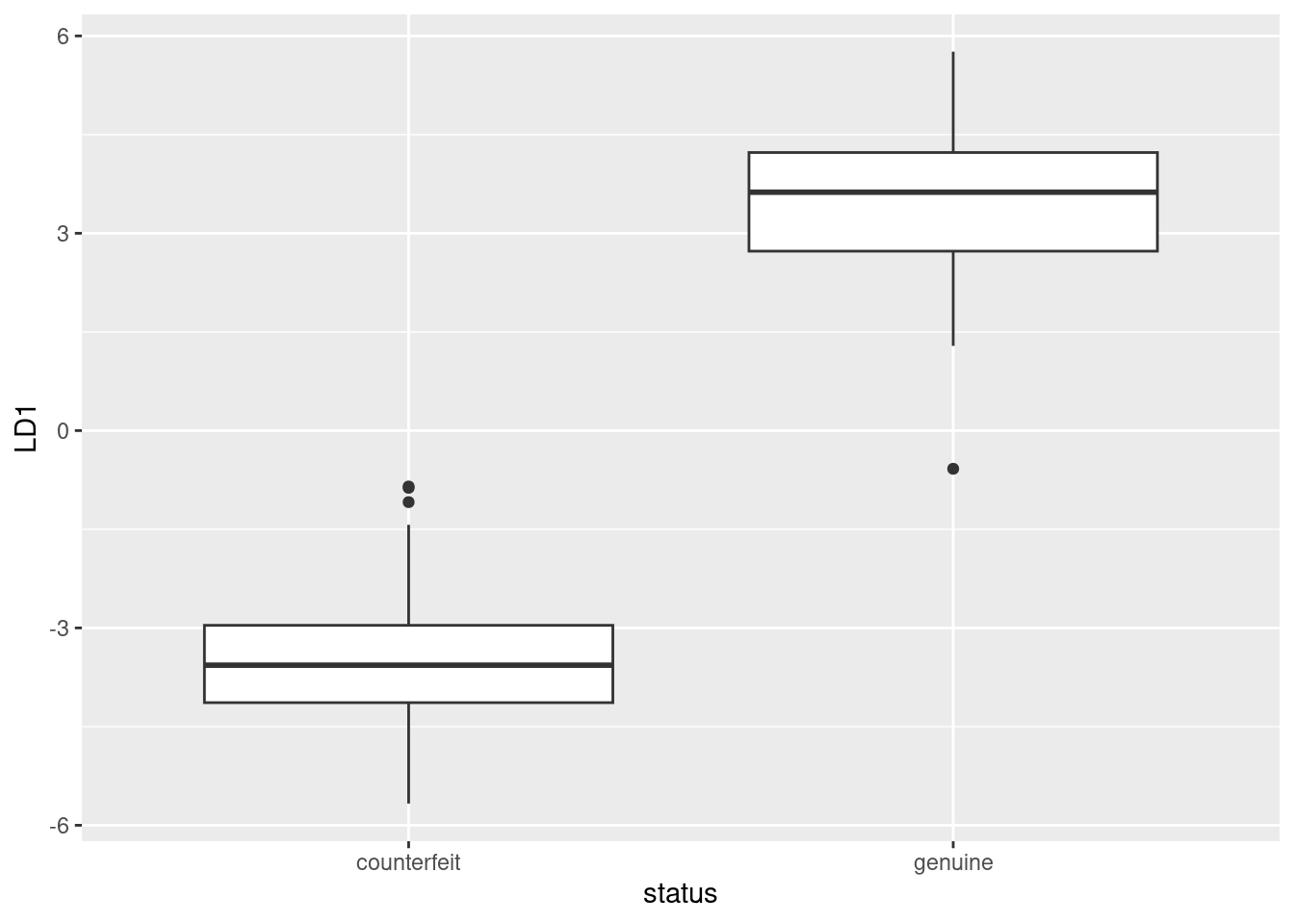

With only one linear discriminant, we can plot LD1 scores on the \(y\)-axis and the grouping variable on the \(x\)-axis. How you do that is up to you.

Before we start, though, we need the LD1 scores. This means doing predictions. The discriminant scores are in there. We take the prediction output and make a data frame with all the things in the original data. My current preference (it changes) is to store the predictions, and then cbind them with the original data, thus:

swiss.pred <- predict(swiss.3)

d <- cbind(swiss, swiss.pred)

head(d)

I needed head because cbind makes an old-fashioned data.frame rather than a tibble, so if you display it, you get all of it.

This gives the LD1 scores, predicted groups, and posterior probabilities as well. That saves us having to pick out the other things later. The obvious thing is a boxplot. By examining d above (didn’t you?), you saw that the LD scores were in a column called LD1:

ggplot(d, aes(x = status, y = LD1)) + geom_boxplot()

This shows that positive LD1 scores go (almost without exception) with genuine bills, and negative ones with counterfeit bills. It also shows that there are three outlier bills, two counterfeit ones with unusually high LD1 score, and one genuine one with unusually low LD1 score, at least for a genuine bill.

This goes to show that (the Box M test notwithstanding) the two types of bill really are different in a way that is worth investigating.

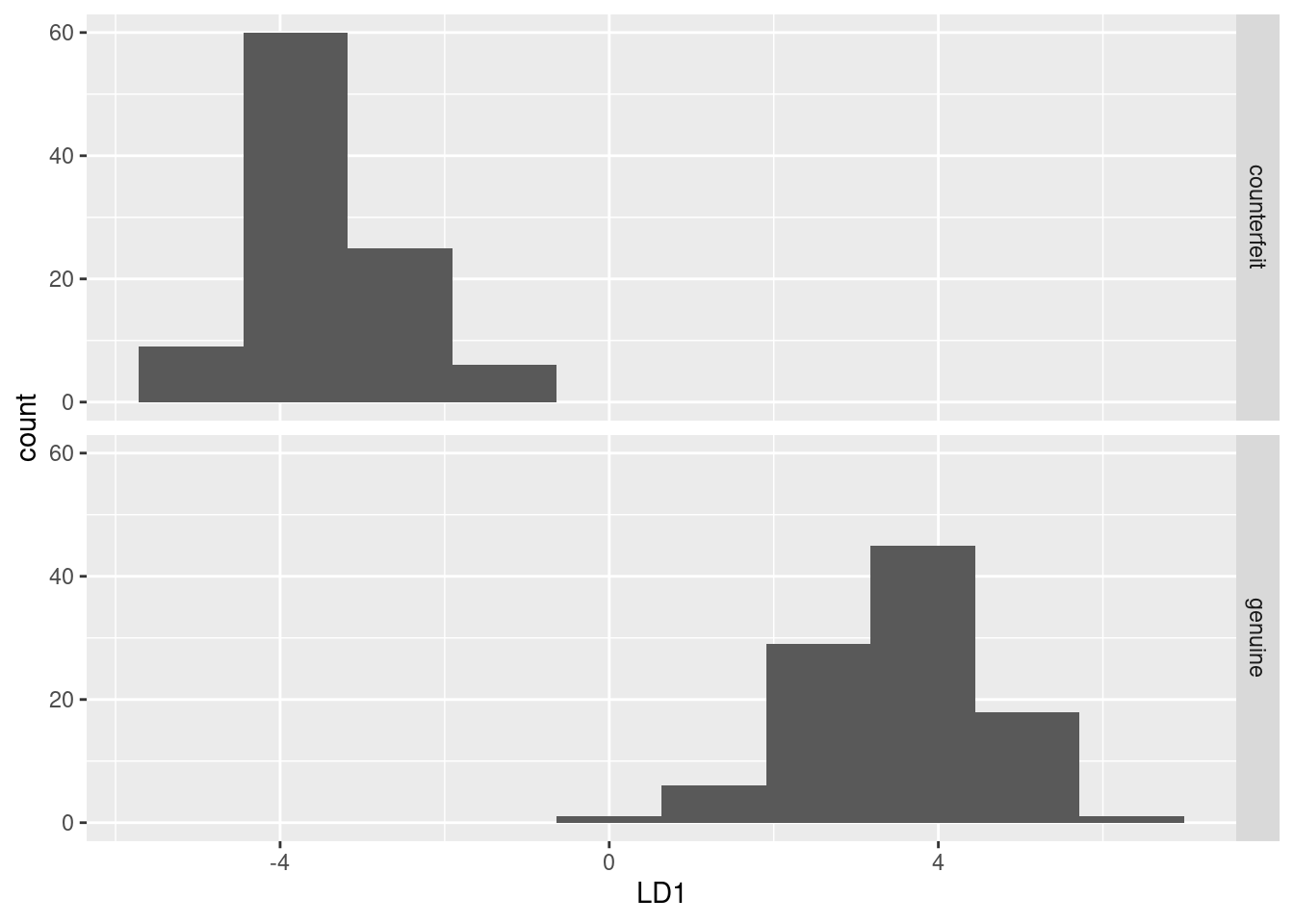

Or you could do faceted histograms of LD1 by status:

ggplot(d, aes(x = LD1)) + geom_histogram(bins = 10) + facet_grid(status ~ .)

This shows much the same thing as plot(swiss.3) does (try it).

\(\blacksquare\)

- What kind of score on LD1 do genuine bills typically have? What kind of score do counterfeit bills typically have? What characteristics of a bill, therefore, would you look at to determine if a bill is genuine or counterfeit?

Solution

The genuine bills almost all have a positive score on LD1, while the counterfeit ones all have a negative one. This means that the genuine bills (depending on your answer to (here)) have a large diag, or they have a large left and diag, and a small right, bottom and top. If you look at your table of means in (here), you’ll see that the genuine bills do indeed have a large diag, or, depending on your earlier answer, a small right, bottom and top, but not actually a large left (the left values are very close for the genuine and counterfeit bills, and the genuine mean is if anything slightly smaller).

Extra: as to that last point, this is easy enough to think about. A boxplot seems a nice way to display it:

ggplot(d, aes(y = left, x = status)) + geom_boxplot()

There is a fair bit of overlap: the median is higher for the counterfeit bills, but the highest value actually belongs to a genuine one.

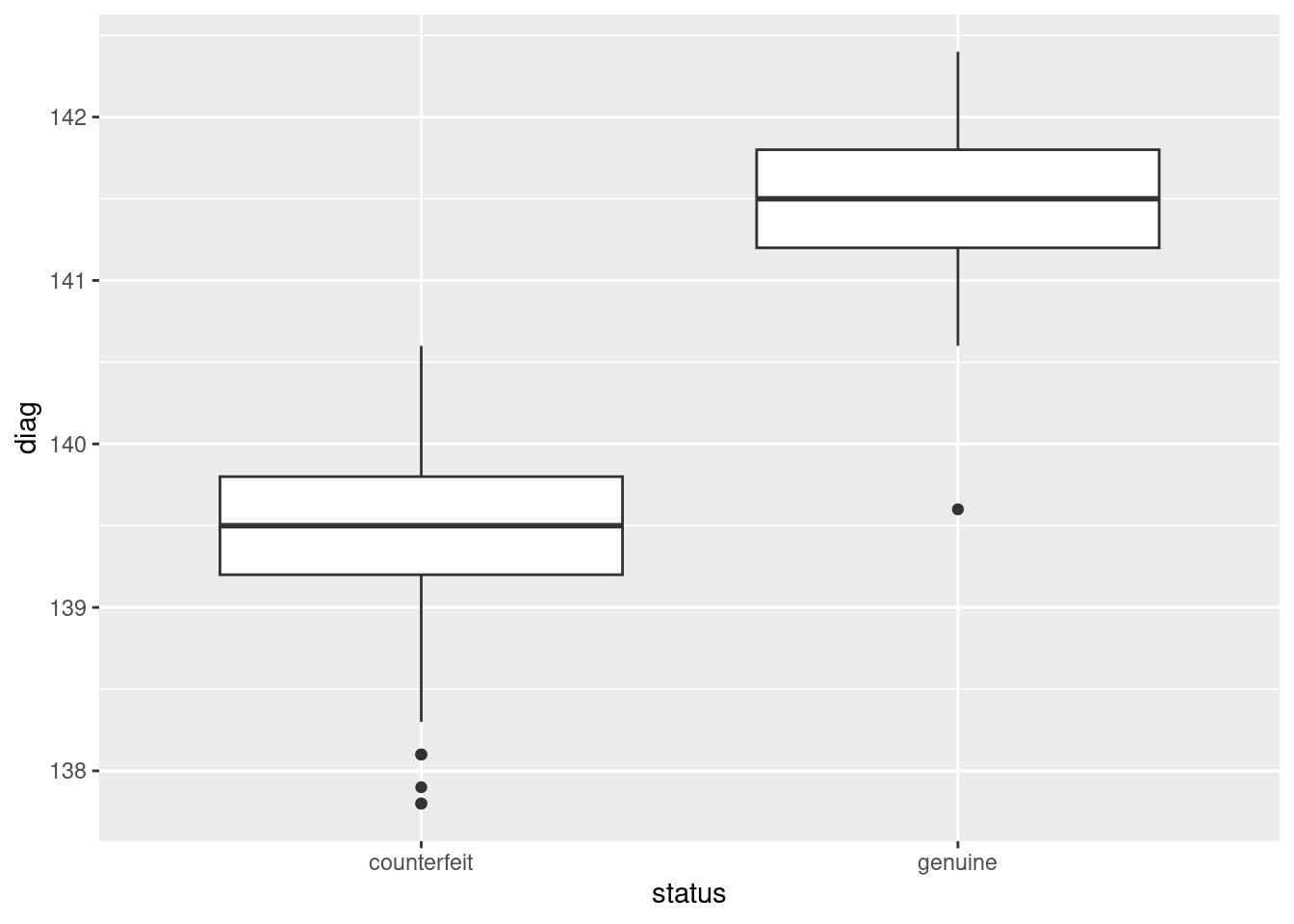

Compare that to diag:

ggplot(d, aes(y = diag, x = status)) + geom_boxplot()

Here, there is an almost complete separation of the genuine and counterfeit bills, with just one low outlier amongst the genuine bills spoiling the pattern. I didn’t look at the predictions (beyond the discriminant scores), since this question (as set on an assignment a couple of years ago) was already too long, but there is no difficulty in doing so. Everything is in the data frame I called d:

with(d, table(obs = status, pred = class))

pred

obs counterfeit genuine

counterfeit 100 0

genuine 1 99

(This labels the rows and columns, which is not necessary but is nice.)
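If you want a single number to summarize a table like this, the proportion correctly classified is the sum of the diagonal divided by the total. A minimal sketch, with the four frequencies typed in by hand from the table above rather than taken from d, so that it runs on its own:

```r
# Frequencies copied from the table above (rows are observed status,
# columns are predicted status). matrix() fills column by column.
tab <- matrix(
  c(100, 1, 0, 99),
  nrow = 2,
  dimnames = list(
    obs = c("counterfeit", "genuine"),
    pred = c("counterfeit", "genuine")
  )
)

n_correct <- sum(diag(tab)) # bills on the diagonal were classified correctly
n_total <- sum(tab)
n_correct / n_total
```

With your own data frame you would use the output of table directly in place of the hand-typed matrix.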

The tidyverse way is to make a data frame out of the actual and predicted statuses, and then count what’s in there:

d %>% count(status, class)

This gives a “long” table, with frequencies for each of the combinations for which anything was observed.

Frequency tables are usually wide, and we can make this one so by pivot-wider-ing class:

d %>%

count(status, class) %>%

pivot_wider(names_from = class, values_from = n)

One of the genuine bills is incorrectly classified as a counterfeit one (evidently that low outlier on LD1), but every single one of the counterfeit bills is classified correctly. That missing value is actually a frequency that is zero, which you can fix up thus:

d %>%

count(status, class) %>%

pivot_wider(names_from = class, values_from = n, values_fill = 0)

which turns any missing values into the zeroes they should be in this kind of problem.

It would be interesting to see what the posterior probabilities look like for that misclassified bill:

d %>% filter(status != class)

On the basis of the six measured variables, this looks a lot more like a counterfeit bill than a genuine one. Are there any other bills where there is any doubt? One way to find out is to find the larger of the two posterior probabilities for each bill. If this maximum is not close to 1, there is some doubt about whether the bill is real or fake. 0.99 seems like a very stringent cutoff, but let’s try it and see:

d %>%

mutate(max.post = pmax(posterior.counterfeit, posterior.genuine)) %>%

filter(max.post < 0.99) %>%

dplyr::select(-c(length:diag))

The only one is the bill that was misclassified: it was actually genuine, but was classified as counterfeit. The posterior probabilities say that it was pretty unlikely to be genuine, but it was the only bill for which there was any noticeable doubt at all.

I had to use pmax rather than max there, because I wanted max.post to contain the larger of the two corresponding entries: that is, the first entry in max.post is the larger of the first entry of counterfeit and the first entry in genuine. If I used max instead, I’d get the largest of all the entries in counterfeit and all the entries in genuine, repeated 200 times. (Try it and see.) pmax stands for “parallel maximum”, that is, for each row separately. This also should work:

d %>%

rowwise() %>%

mutate(max.post = max(posterior.counterfeit, posterior.genuine)) %>%

filter(max.post < 0.99) %>%

select(-c(length:diag))

Because we’re using rowwise, max is applied to the pairs of values of posterior.counterfeit and posterior.genuine, taken one row at a time.
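The difference between max and pmax is easiest to see on a tiny example. The two vectors below are made-up numbers, not posterior probabilities from the banknote data:

```r
# Two made-up vectors of the same length.
a <- c(0.2, 0.9, 0.5)
b <- c(0.8, 0.1, 0.5)

# Parallel maximum: the larger of each pair, so one value per position.
pmax(a, b)

# Ordinary maximum: the single largest value among all six numbers.
max(a, b)
```

Inside mutate, the single value from max would be recycled down the whole column, which is why every row would get the same answer.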

\(\blacksquare\)

35.9 Urine and obesity: what makes a difference?

A study was made of the characteristics of urine of young men. The men were classified into four groups based on their degree of obesity. (The groups are labelled a, b, c, d.) Four variables were measured, x (which you can ignore), pigment creatinine, chloride and chlorine. The data are in link as a .csv file. There are 45 men altogether.

Yes, you may have seen this one before. What you found was something like this:

my_url <- "http://ritsokiguess.site/datafiles/urine.csv"

urine <- read_csv(my_url)

Rows: 45 Columns: 5

── Column specification ────────────────────────────────────────────────────────

Delimiter: ","

chr (1): obesity

dbl (4): x, creatinine, chloride, chlorine

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.

response <- with(urine, cbind(creatinine, chlorine, chloride))

urine.1 <- manova(response ~ obesity, data = urine)

summary(urine.1)

Df Pillai approx F num Df den Df Pr(>F)

obesity 3 0.43144 2.2956 9 123 0.02034 *

Residuals 41

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

summary(BoxM(response, urine$obesity))

Box's M Test

Chi-Squared Value = 30.8322 , df = 18 and p-value: 0.0301

Our aim is to understand why the MANOVA result was significant. (Remember that the P-value on Box’s M test is not small enough to be worried about.)

- Read in the data again (copy the code from above) and obtain a discriminant analysis.

Solution

As above, plus:

urine.1 <- lda(obesity ~ creatinine + chlorine + chloride, data = urine)

urine.1

Call:

lda(obesity ~ creatinine + chlorine + chloride, data = urine)

Prior probabilities of groups:

a b c d

0.2666667 0.3111111 0.2444444 0.1777778

Group means:

creatinine chlorine chloride

a 15.89167 5.275000 6.012500

b 17.82143 7.450000 5.214286

c 16.34545 8.272727 5.372727

d 11.91250 9.675000 3.981250

Coefficients of linear discriminants:

LD1 LD2 LD3

creatinine 0.24429462 -0.1700525 -0.02623962

chlorine -0.02167823 -0.1353051 0.11524045

chloride 0.23805588 0.3590364 0.30564592

Proportion of trace:

LD1 LD2 LD3

0.7476 0.2430 0.0093

\(\blacksquare\)

- How many linear discriminants were you expecting? Explain briefly.

Solution

There are 3 variables and 4 groups, so the smaller of 3 and \(4-1=3\): that is, 3.

\(\blacksquare\)

- Why do you think we should pay attention to the first two linear discriminants but not the third? Explain briefly.

Solution

The first two “proportion of trace” values are a lot bigger than the third (or, the third one is close to 0).
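If you want the “proportion of trace” values as numbers you can work with, rather than reading them off the printout, they can be rebuilt from the fitted object. This sketch assumes (as I understand the way MASS prints an lda fit) that they are the squared singular values stored in svd, scaled to add up to 1. It uses the built-in iris data so that it runs without the urine data file.

```r
library(MASS)

# Built-in iris data stands in for the urine data (an assumption made
# so that the example is self-contained): 3 groups, 4 variables.
iris.lda <- lda(Species ~ ., data = iris)

# Squared singular values, scaled to sum to 1. This is assumed to match
# the "Proportion of trace" line in the printed output.
prop_trace <- iris.lda$svd^2 / sum(iris.lda$svd^2)
round(prop_trace, 4)
```

Compare the result with what you see when you print iris.lda. A discriminant whose proportion is close to zero, like LD3 for the urine data, is one you can ignore.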

\(\blacksquare\)

- Plot the first two linear discriminant scores (against each other), with each obesity group being a different colour.

Solution

First obtain the predictions, and then make a data frame out of the original data and the predictions.

urine.pred <- predict(urine.1)

d <- cbind(urine, urine.pred)

head(d)

urine produced the first five columns and urine.pred produced the rest.

To go a more tidyverse way, we can combine the original data frame and the predictions using bind_cols, but we have to be more careful that the things we are gluing together are both data frames:

class(urine)

[1] "spec_tbl_df" "tbl_df" "tbl" "data.frame"

class(urine.pred)

[1] "list"

urine is a tibble all right, but urine.pred is a list. What does it look like?

glimpse(urine.pred)

List of 3

$ class : Factor w/ 4 levels "a","b","c","d": 2 1 2 2 1 1 3 3 1 1 ...

$ posterior: num [1:45, 1:4] 0.233 0.36 0.227 0.294 0.477 ...

..- attr(*, "dimnames")=List of 2

.. ..$ : chr [1:45] "1" "2" "3" "4" ...

.. ..$ : chr [1:4] "a" "b" "c" "d"

$ x : num [1:45, 1:3] 0.393 -0.482 0.975 2.188 2.018 ...

..- attr(*, "dimnames")=List of 2

.. ..$ : chr [1:45] "1" "2" "3" "4" ...

.. ..$ : chr [1:3] "LD1" "LD2" "LD3"

A data frame is a list for which all the items are the same length, but some of the things in here are matrices. You can tell because they have a number of rows, 45, and a number of columns, 3 or 4. They do have the right number of rows, though, so something like as.data.frame (a base R function) will smoosh them all into one data frame, grabbing the columns from the matrices:

head(as.data.frame(urine.pred))

You see that the columns that came from matrices have gained two-part names, the first part from the name of the matrix, the second part from the column name within that matrix. Then we can do this:

dd <- bind_cols(urine, as.data.frame(urine.pred))

dd

If you want to avoid base R altogether, though, and go straight to bind_cols, you have to be more careful about the types of things. bind_cols only works with vectors and data frames, not matrices, so that is what it is up to you to make sure you have. That means pulling out the pieces, turning them from matrices into data frames, and then gluing everything back together:

post <- as_tibble(urine.pred$posterior)

ld <- as_tibble(urine.pred$x)

ddd <- bind_cols(urine, class = urine.pred$class, ld, post)

ddd

That’s a lot of work, but you might say that it’s worth it because you are now absolutely sure what kind of thing everything is. I also had to be slightly careful with the vector of class values; in ddd it has to have a name, so I have to make sure I give it one.2

Any of these ways (in general) is good. The last way is a more careful approach, since you are making sure things are of the right type rather than relying on R to convert them for you, but I don’t mind which way you go.

Now make the plot, making sure that you are using columns with the right names. I’m using my first data frame, with the two-part names:

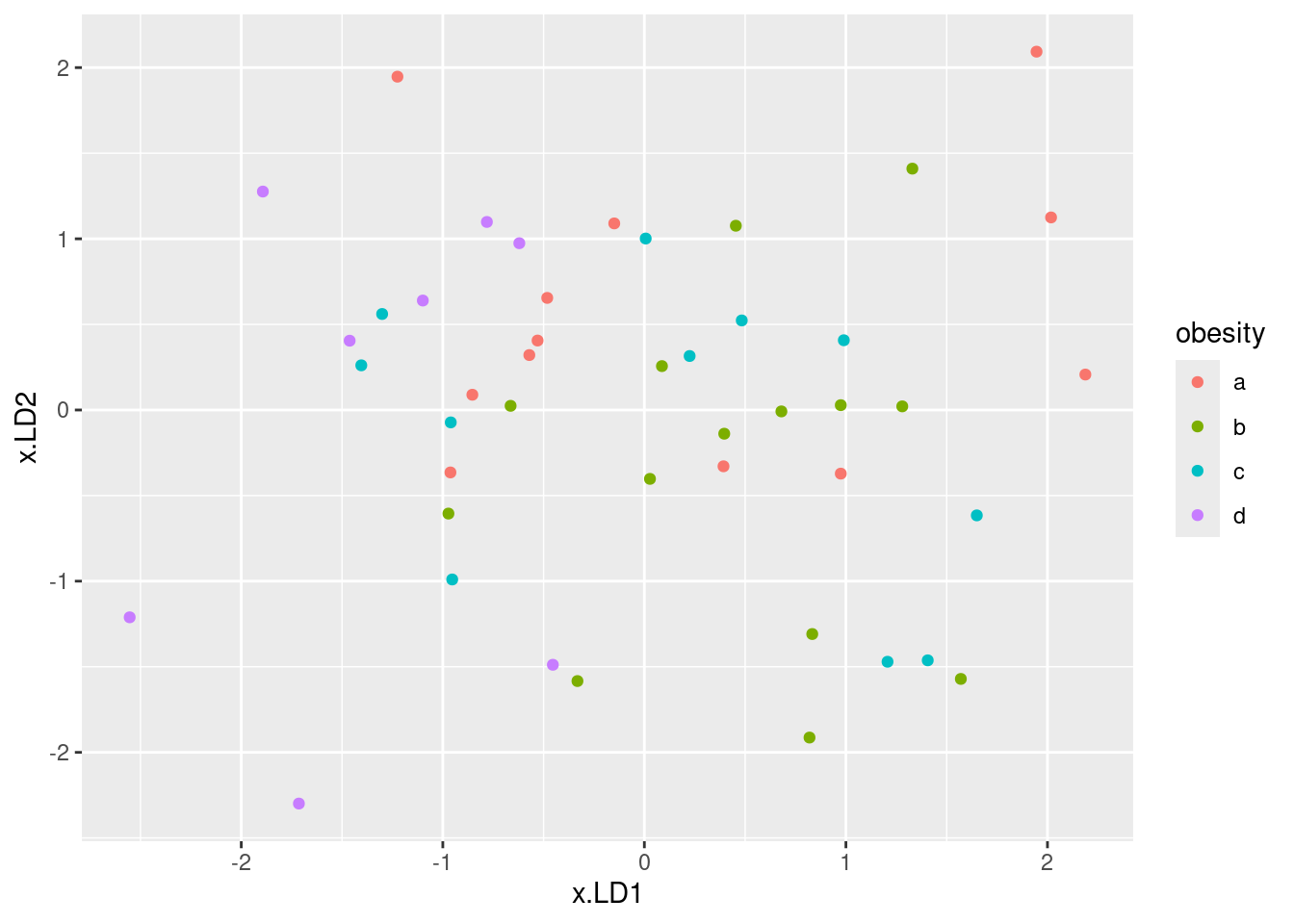

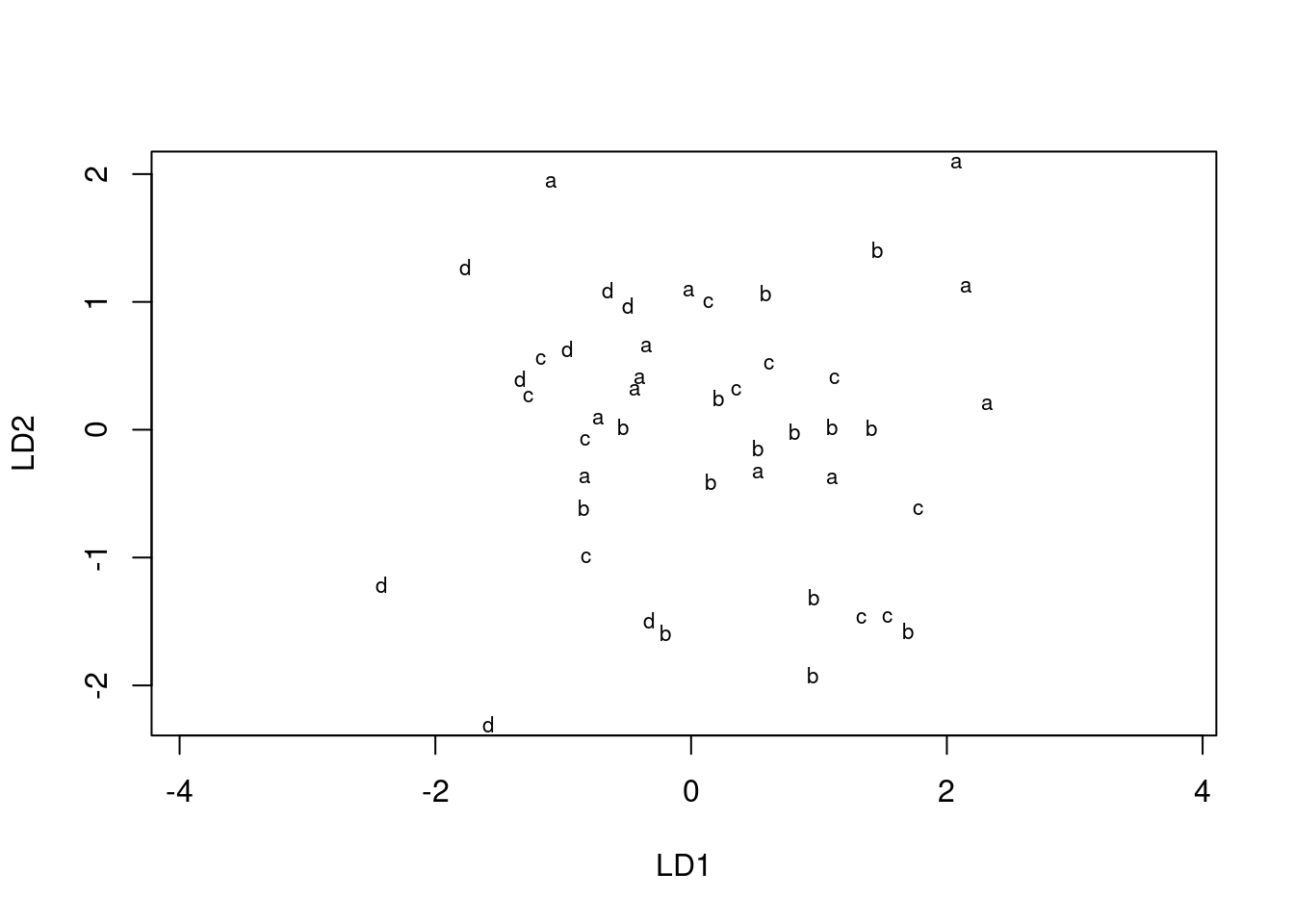

ggplot(d, aes(x = x.LD1, y = x.LD2, colour = obesity)) + geom_point()

\(\blacksquare\)
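The two-part names that as.data.frame makes can be seen in miniature. This is only a sketch: pred_like is a made-up list shaped like the output of predict (a factor plus a matrix with named columns), not the real urine.pred.

```r
# A made-up list with the same shape as predict() output:
# one factor and one matrix whose columns are named LD1 and LD2.
pred_like <- list(
  class = factor(c("a", "b", "a")),
  x = matrix(c(0.4, -0.5, 1.0, 2.2, 2.0, -1.3),
    nrow = 3,
    dimnames = list(NULL, c("LD1", "LD2"))
  )
)

# as.data.frame splits the matrix into columns and glues the
# list name onto the matrix column names.
flat <- as.data.frame(pred_like)
names(flat)
```

The matrix x contributes the columns x.LD1 and x.LD2, which is why the plot above uses those names.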

- * Looking at your plot, discuss how (if at all) the discriminants separate the obesity groups. (Where does each obesity group fall on the plot?)

Solution

My immediate reaction was “they don’t much”. If you look a bit more closely, the b group, in green, is on the right (high LD1) and the d group (purple) is on the left (low LD1). The a group, red, is mostly at the top (high LD2) but the c group, blue, really is all over the place.

The way to tackle interpreting a plot like this is to look for each group individually and see if that group is only or mainly found on a certain part of the plot.

This can be rationalized by looking at the “coefficients of linear discriminants” on the output. LD1 is low if creatinine and chloride are low (it has nothing much to do with chlorine since that coefficient is near zero). Group d is lowest on both creatinine and chloride, so that will be lowest on LD1. LD2 is high if chloride is high, or creatinine and chlorine are low. Out of the groups a, b, c, a has the highest mean on chloride and lowest means on the other two variables, so this should be highest on LD2 and (usually) is.

Looking at the means is only part of the story; if the individuals within a group are very variable, as they are here (especially group c), then that group will appear all over the plot. The table of means only says how the average individual within a group stacks up.

ggbiplot(urine.1, groups = urine$obesity)

This shows (in a way that is perhaps easier to see) how the linear discriminants are related to the original variables, and thus how the groups differ in terms of the original variables.3 Most of the B’s are high creatinine and high chloride (on the right); most of the D’s are low on both (on the left). LD2 has a bit of chloride, but not much of anything else.

Extra: the way we used to do this was with “base graphics”, which involved plotting the lda output itself:

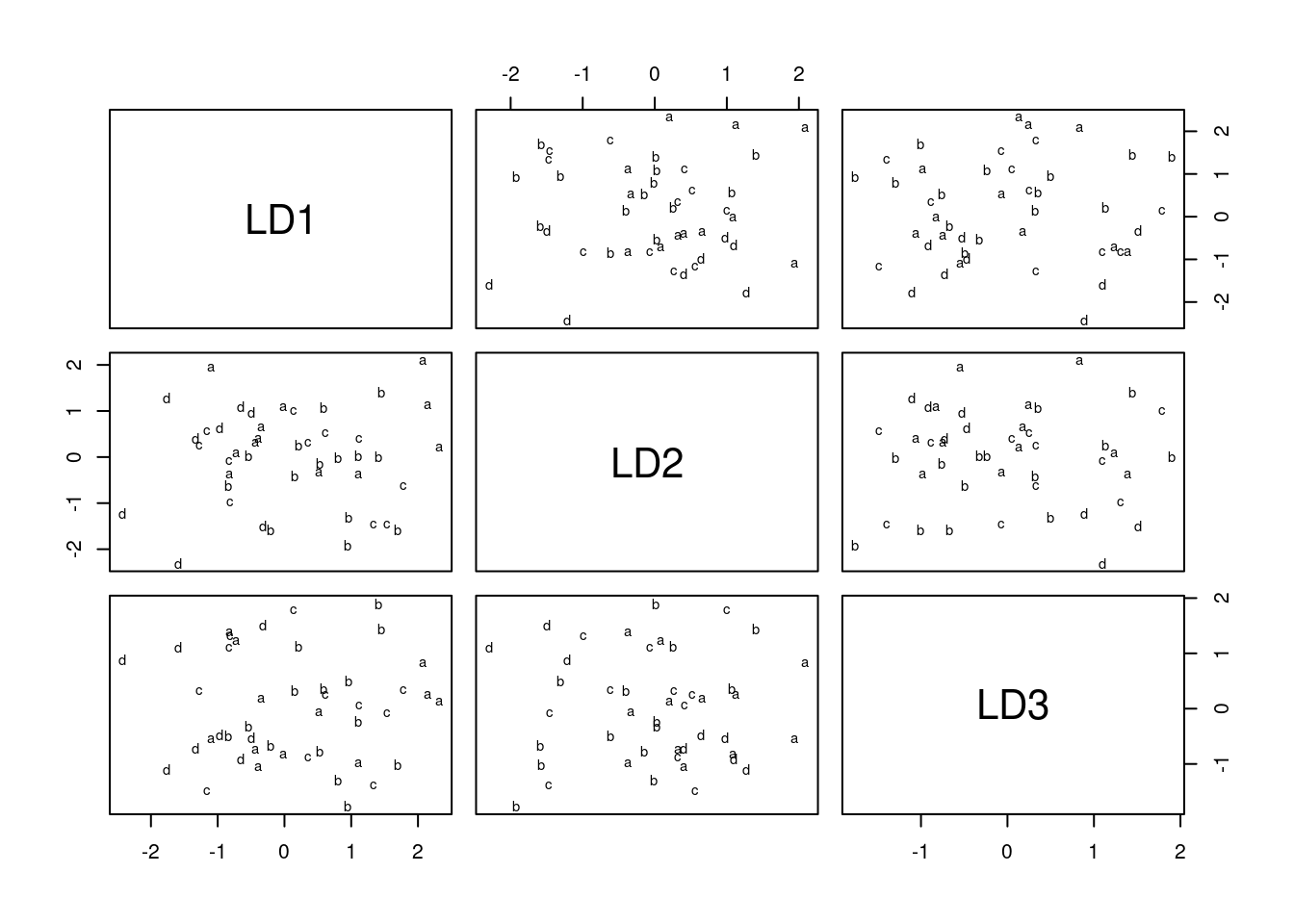

plot(urine.1)

which is a plot of each discriminant score against each other one. You can plot just the first two, like this:

plot(urine.1, dimen = 2)

This is easier than using ggplot, but (i) less flexible and (ii) you have to figure out how it works rather than doing things the standard ggplot way. So I went with constructing a data frame from the predictions, and then ggplotting that. It’s a matter of taste which way is better.

\(\blacksquare\)

- * Obtain a table showing observed and predicted obesity groups. Comment on the accuracy of the predictions.

Solution

Make a table, one way or another:

tab <- with(d, table(obesity, class))

tab

class

obesity a b c d

a 7 3 2 0

b 2 9 2 1

c 3 4 1 3

d 2 0 1 5

class is always the predicted group in these. You can also name things in table. Or, if you prefer (equally good), there is the tidyverse way of counting all the combinations of true obesity and predicted class, which can be done all in one go, or in two steps by saving the data frame first. I’m saving my results for later:

d %>% count(obesity, class) -> tab

tab

or, if you prefer to make it look more like a table of frequencies:

tab %>% pivot_wider(names_from=class, values_from=n, values_fill = list(n=0))

The thing on the end fills in zero frequencies as such (they would otherwise be NA, which they are not: we know they are zero).

My immediate reaction to this is “it’s terrible”! But at least some of the men have their obesity group correctly predicted: 7 of the \(7+3+2+0=12\) men that are actually in group a are predicted to be in a; 9 of the 14 actual b’s are predicted to be b’s; 5 of the 8 actual d’s are predicted to be d’s. These are not so awful. But only 1 of the 11 c’s is correctly predicted to be a c!

As for what I want to see: I am looking for some kind of statement about how good you think the predictions are (the word “terrible” is fine for this) with some kind of support for your statement. For example, “the predictions are not that good, but at least group B is predicted with some accuracy (9 out of 14).”
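If you went the table way, the fractions I just quoted can be pulled off the table itself. This is a sketch in base R: I have typed the frequencies from the table above into a matrix (the name tab_m is mine), with the true groups in the rows and the predicted ones in the columns.

```r
# Observed (rows) by predicted (columns) frequencies, copied from above
tab_m <- matrix(
  c(7, 3, 2, 0,
    2, 9, 2, 1,
    3, 4, 1, 3,
    2, 0, 1, 5),
  nrow = 4, byrow = TRUE,
  dimnames = list(
    obesity = c("a", "b", "c", "d"),
    class = c("a", "b", "c", "d")
  )
)

# The diagonal holds the correct predictions; the row totals are
# the numbers of men actually in each group.
n_correct <- sum(diag(tab_m))
correct_by_group <- diag(tab_m) / rowSums(tab_m)
round(correct_by_group, 2)
```

The c entry is by far the smallest, which is the “only 1 of the 11” again.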

I think looking at how well the individual groups were predicted is the most incisive way of getting at this, because the c men are the hardest to get right and the others are easier, but you could also think about an overall misclassification rate. This comes most easily from the “tidy” table:

tab %>% count(correct = (obesity == class), wt = n)

You can count anything, not just columns that already exist. This one is a kind of combined mutate-and-count to create the (logical) column called correct.

It’s a shortcut for this:

tab %>%

mutate(is_correct = (obesity == class)) %>%

count(is_correct, wt = n)

If I don’t put the wt, count counts the number of rows for which the true and predicted obesity group is the same. But that’s not what I want here: I want the number of observations totalled up, which is what the wt= does. It says “use the things in the given column as weights”, which means to total them up rather than count up the number of rows.

This says that 22 men were classified correctly and 23 were gotten wrong. We can find the proportions correct and wrong:

tab %>%

count(correct = (obesity == class), wt = n) %>%

mutate(proportion = n / sum(n))

and we see that 51% of men had their obesity group predicted wrongly. This is the overall misclassification rate, which is a simple summary of how good a job the discriminant analysis did.

There is a subtlety here. n has changed its meaning in the middle of this calculation! In tab, n is counting the number of obesity observed and predicted combinations, but now it is counting the number of men classified correctly and incorrectly. The wt=n uses the first n, but the mutate line uses the new n, the result of the count line here. (I think count used to use nn for the result of the second count, so that you could tell them apart, but it no longer seems to do so.)
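To see what the wt is doing, here is a sketch that does the count both ways. I typed the frequencies into a data frame by hand (the name tab_demo is mine), leaving out the two combinations that never occurred, as count would.

```r
library(dplyr)

# One row per observed/predicted combination that actually occurred
tab_demo <- data.frame(
  obesity = c("a", "a", "a", "b", "b", "b", "b",
              "c", "c", "c", "c", "d", "d", "d"),
  class = c("a", "b", "c", "a", "b", "c", "d",
            "a", "b", "c", "d", "a", "c", "d"),
  n = c(7, 3, 2, 2, 9, 2, 1, 3, 4, 1, 3, 2, 1, 5)
)

# Without wt: counts ROWS of tab_demo (combinations), not men
rows_only <- tab_demo %>% count(correct = (obesity == class))

# With wt = n: totals up the frequencies, so it counts men
weighted <- tab_demo %>% count(correct = (obesity == class), wt = n)

rows_only
weighted
```

The first version says only that 4 of the 14 combinations are “correct” ones, which is no use to anybody; the second is the one that adds up to 45 men.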

I said above that the obesity groups were not equally easy to predict. A small modification of the above will get the misclassification rates by (true) obesity group. This is done by putting an appropriate group_by in at the front, before we do any summarizing:

tab %>%

group_by(obesity) %>%

count(correct = (obesity == class), wt = n) %>%

mutate(proportion = n / sum(n))

This gives the proportion wrong and correct for each (true) obesity group. I’m going to do one more cosmetic thing to make it easier to read, a kind of “untidying”:

tab %>%

group_by(obesity) %>%

count(correct = (obesity == class), wt = n) %>%

mutate(proportion = n / sum(n)) %>%

select(-n) %>%

pivot_wider(names_from=correct, values_from=proportion)

Looking down the TRUE column, groups A, B and D were gotten about 60% correct (and 40% wrong), but group C is much worse. The overall misclassification rate is made bigger by the fact that C is so hard to predict.

Find out for yourself what happens if I fail to remove the n column before doing the pivot_wider.

A slightly more elegant look is obtained this way, by making nicer values than TRUE and FALSE:

tab %>%

group_by(obesity) %>%

mutate(prediction_stat = ifelse(obesity == class, "correct", "wrong")) %>%

count(prediction_stat, wt = n) %>%

mutate(proportion = n / sum(n)) %>%

select(-n) %>%

pivot_wider(names_from=prediction_stat, values_from=proportion)

\(\blacksquare\)

Solution

On the plot of the discriminant scores, we said that there was a lot of scatter, but that groups a, b and d tended to be found at the top, right and left respectively of the plot. That suggests that these three groups should be somewhat predictable. The c’s, on the other hand, were all over the place on the plot, and were mostly predicted wrong.

The idea is that the stories you pull from the plot and the predictions should be more or less consistent. There are several ways you might say that: another approach is to say that the observations are all over the place on the plot, and the predictions are all bad. This is not as insightful as my comments above, but if that’s what the plot told you, that’s what the predictions would seem to be saying as well. (Or even, the predictions are not so bad compared to the apparently random pattern on the plot, if that’s what you saw. There are different ways to say something more or less sensible.)

\(\blacksquare\)

35.10 Understanding a MANOVA

One use of discriminant analysis is to understand the results of a MANOVA. This question is a followup to a previous MANOVA that we did, the one with two variables y1 and y2 and three groups a through c. The data were in link.

- Read the data in again and run the MANOVA that you did before.

Solution

This is an exact repeat of what you did before:

my_url <- "http://ritsokiguess.site/datafiles/simple-manova.txt"

simple <- read_delim(my_url, " ")

Rows: 12 Columns: 3

── Column specification ────────────────────────────────────────────────────────

Delimiter: " "

chr (1): group

dbl (2): y1, y2

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.

simple

response <- with(simple, cbind(y1, y2))

simple.3 <- manova(response ~ group, data = simple)

summary(simple.3)

Df Pillai approx F num Df den Df Pr(>F)

group 2 1.3534 9.4196 4 18 0.0002735 ***

Residuals 9

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

This P-value is small, so there is some way in which some of the groups differ on some of the variables.4

We should check that we believe this, using Box’s M test:5

summary(BoxM(response, simple$group))

Box's M Test

Chi-Squared Value = 3.517357 , df = 6 and p-value: 0.742

There is no problem here: no evidence that any of the response variables differ in spread across the groups.

\(\blacksquare\)

- Run a discriminant analysis “predicting” group from the two response variables. Display the output.

Solution

This:

simple.4 <- lda(group ~ y1 + y2, data = simple)

simple.4

Call:

lda(group ~ y1 + y2, data = simple)

Prior probabilities of groups:

a b c

0.3333333 0.2500000 0.4166667

Group means:

y1 y2

a 3.000000 4.0

b 4.666667 7.0

c 8.200000 6.4

Coefficients of linear discriminants:

LD1 LD2

y1 0.7193766 0.4060972

y2 0.3611104 -0.9319337

Proportion of trace:

LD1 LD2

0.8331 0.1669

Note that this is the other way around from MANOVA: here, we are “predicting the group” from the response variables, in the same manner as one of the flavours of logistic regression: “what makes the groups different, in terms of those response variables?”.

\(\blacksquare\)

- * In the output from the discriminant analysis, why are there exactly two linear discriminants LD1 and LD2?

Solution

There are two linear discriminants because there are 3 groups and two variables, so there are the smaller of \(3-1\) and 2 discriminants.
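If you want to convince yourself of the “smaller of” rule, here is a sketch using the built-in iris data as a stand-in (it is not our data): with 3 species and 4 measurements there should be 2 discriminants, and with only 2 species there should be 1.

```r
library(MASS)

# 3 groups, 4 variables: min(3 - 1, 4) = 2 discriminants
fit3 <- lda(Species ~ ., data = iris)
n_ld3 <- ncol(fit3$scaling)

# Drop one species: 2 groups, 4 variables: min(2 - 1, 4) = 1
iris2 <- droplevels(subset(iris, Species != "setosa"))
fit2 <- lda(Species ~ ., data = iris2)
n_ld2 <- ncol(fit2$scaling)

c(three_groups = n_ld3, two_groups = n_ld2)
```

The scaling piece of the fitted object is the table of “coefficients of linear discriminants”, so its number of columns is the number of discriminants.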

\(\blacksquare\)

- * From the output, how would you say that the first linear discriminant LD1 compares in importance to the second one LD2: much more important, more important, equally important, less important, much less important? Explain briefly.

Solution

Look at the Proportion of trace at the bottom of the output. The first number is much bigger than the second, so the first linear discriminant is much more important than the second. (I care about your reason; you can say it’s “more important” rather than “much more important” and I’m good with that.)
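In case you are wondering where the proportion of trace comes from: the fitted lda object has a piece called svd, and the printed proportions are the squares of those values, scaled to add up to 1. A sketch, again with iris as a stand-in for our data:

```r
library(MASS)

fit <- lda(Species ~ ., data = iris)

# One singular value per discriminant; square and scale to sum to 1
prop_trace <- fit$svd^2 / sum(fit$svd^2)
round(prop_trace, 4)
```

These should match the “Proportion of trace” line that you get by printing fit.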

\(\blacksquare\)

- Obtain a plot of the discriminant scores.

Solution

This was the old-fashioned way:

plot(simple.4)

It needs cajoling to produce colours, but we can do better. The first thing is to obtain the predictions:

simple.pred <- predict(simple.4)

Then we make a data frame out of the discriminant scores and the true groups, using cbind:

d <- cbind(simple, simple.pred)

head(d)

or like this, for fun:6

ld <- as_tibble(simple.pred$x)

post <- as_tibble(simple.pred$posterior)

dd <- bind_cols(simple, class = simple.pred$class, ld, post)

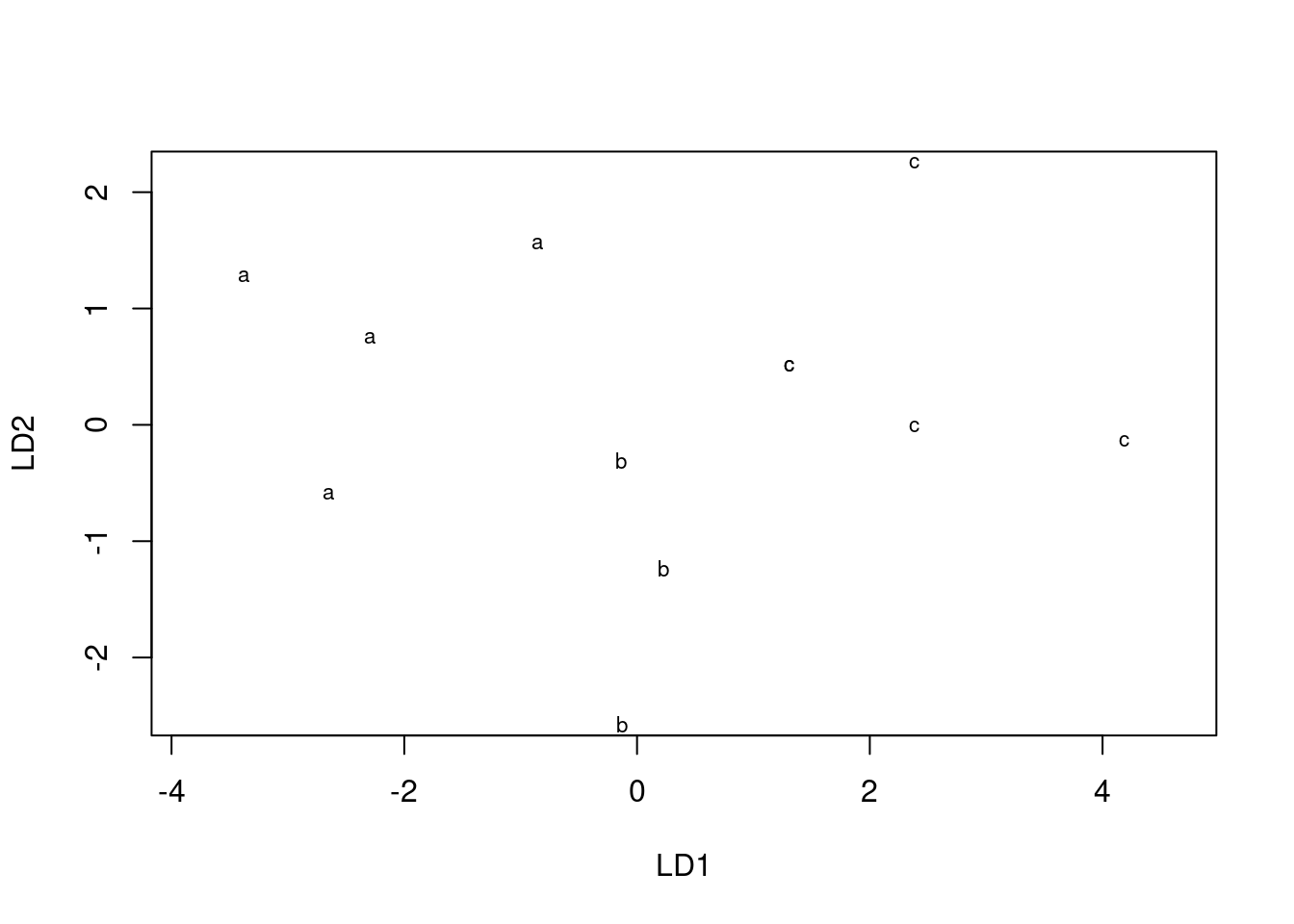

dd

After that, we plot the first one against the second one, colouring by true groups:

ggplot(d, aes(x = x.LD1, y = x.LD2, colour = group)) + geom_point()

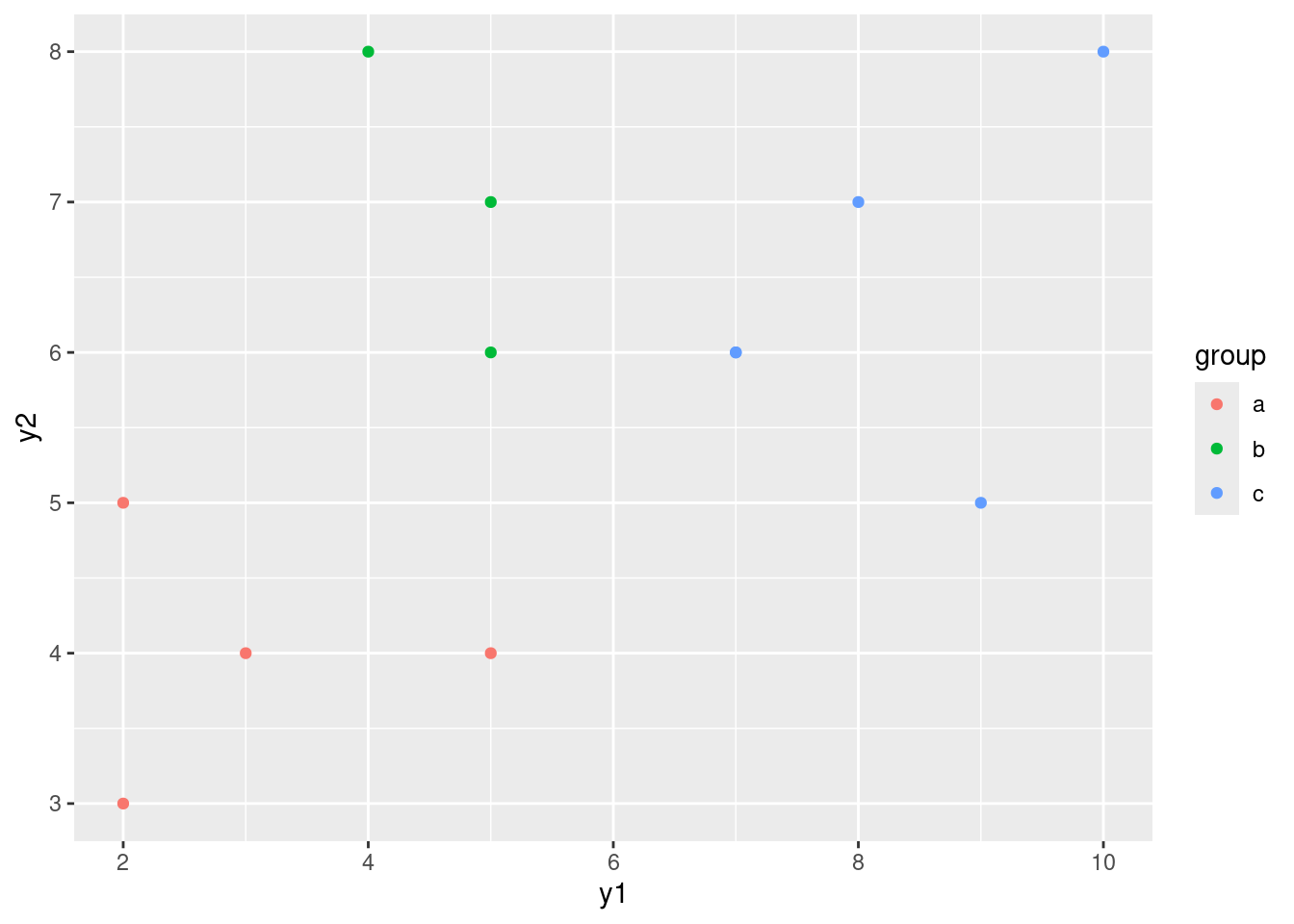

I wanted to compare this plot with the original plot of y1 vs. y2, coloured by groups:

ggplot(simple, aes(x = y1, y = y2, colour = group)) + geom_point()

The difference between this plot and the one of LD1 vs. LD2 is that things have been rotated a bit so that most of the separation of groups is done by LD1. This is reflected in the fact that LD1 is quite a bit more important than LD2: the latter doesn’t help much in separating the groups.

With that in mind, we could also plot just LD1, presumably against groups via boxplot:

ggplot(d, aes(x = group, y = x.LD1)) + geom_boxplot()

This shows that LD1 does a pretty fine job of separating the groups, and LD2 doesn’t really have much to add to the picture.

\(\blacksquare\)

- Describe briefly how LD1 and/or LD2 separate the groups. Does your picture confirm the relative importance of LD1 and LD2 that you found earlier? Explain briefly.

Solution

LD1 separates the groups left to right: group a is low on LD1, b is in the middle and c is high on LD1. (There is no intermingling of the groups on LD1, so it separates the groups perfectly.)

As for LD2, all it does (possibly) is to distinguish b (low) from a and c (high). Or you can, just as reasonably, take the view that it doesn’t really separate any of the groups.

Back in the earlier part about importance, you said (I hope) that LD1 was (very) important compared to LD2. This shows up here in that LD1 does a very good job of distinguishing the groups, while LD2 does a poor to non-existent job of separating any groups. (If you didn’t say that before, here is an invitation to reconsider what you did say there.)

\(\blacksquare\)

- What makes group a have a low score on LD1? There are two steps that you need to make: consider the means of group a on variables y1 and y2 and how they compare to the other groups, and consider how y1 and y2 play into the score on LD1.

Solution

The information you need is in the big output.

The means of y1 and y2 for group a are 3 and 4 respectively, which are the lowest of all the groups. That’s the first thing.

The second thing is the coefficients of LD1 in terms of y1 and y2, which are both positive. That means, for any observation, if its y1 and y2 values are large, that observation’s score on LD1 will be large as well. Conversely, if its values are small, as the ones in group a are, its score on LD1 will be small.

You need these two things.

This explains why the group a observations are on the left of the plot. It also explains why the group c observations are on the right: they are large on both y1 and y2, and so large on LD1.

What about LD2? This is a little more confusing (and thus I didn’t ask you about that). Its “coefficients of linear discriminant” are positive on y1 and negative on y2, with the latter being bigger in size. Group b is about average on y1 and distinctly high on y2; the second of these coupled with the negative coefficient on y2 means that the LD2 score for observations in group b will be negative.

For LD2, group a has a low mean on both variables and group c has a high mean, so for both groups there is a kind of cancelling-out happening, and neither group a nor group c will be especially remarkable on LD2.
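If you want to see exactly how the coefficients turn into scores, you can reproduce what predict does by hand. This is a sketch on iris (a stand-in, using two of its variables), and it relies on my understanding that predict centres each variable at the prior-weighted average of the group means before multiplying by the coefficients:

```r
library(MASS)

fit <- lda(Species ~ Sepal.Length + Sepal.Width, data = iris)
X <- as.matrix(iris[, c("Sepal.Length", "Sepal.Width")])

# Centre: average of the group means, weighted by the prior probabilities
centre <- colSums(fit$prior * fit$means)

# Score = (observation minus centre) times the coefficients
scores_by_hand <- scale(X, center = centre, scale = FALSE) %*% fit$scaling

all.equal(unname(scores_by_hand), unname(predict(fit)$x))
```

So an observation that is below the centre on variables with positive coefficients gets a negative score, which is the story of group a on LD1.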

\(\blacksquare\)

- Obtain predictions for the group memberships of each observation, and make a table of the actual group memberships against the predicted ones. How many of the observations were wrongly classified?

Solution

Use the simple.pred that you got earlier. This is the table way:

with(d, table(obs = group, pred = class))

pred

obs a b c

a 4 0 0

b 0 3 0

c 0 0 5

Every single one of the 12 observations has been classified into its correct group. (There is nothing off the diagonal of this table.) The alternative to table is the tidyverse way:

d %>% count(group, class)

or

d %>%

count(group, class) %>%

pivot_wider(names_from=class, values_from=n, values_fill = list(n=0))

if you want something that looks like a frequency table. All the as got classified as a, and so on.

That’s the end of what I asked you to do, but as ever I wanted to press on. The next question to ask after getting the predicted groups is “what are the posterior probabilities of being in each group for each observation”: that is, not just which group do I think it belongs in, but how sure am I about that call? The posterior probabilities in my d are in the columns whose names start with posterior. These have a ton of decimal places, which I like to round off before I display them, e.g. to 3 decimals here:

d %>%

select(y1, y2, group, class, starts_with("posterior")) %>%

mutate(across(starts_with("posterior"), \(post) round(post, 3)))

You see that the posterior probability of an observation being in the group it actually was in is close to 1 all the way down. The only one with any doubt at all is observation #6, which is actually in group b, but has “only” probability 0.814 of being a b based on its y1 and y2 values. What else could it be? Well, the rest of the probability is about equally split between a and c.

Let me see if I can display this observation on the plot in a different way. First I need to make a new column picking out observation 6, and then I use this new variable as the shape of the point I plot:

simple %>%

mutate(is6 = (row_number() == 6)) %>%

ggplot(aes(x = y1, y = y2, colour = group, shape = is6)) +

geom_point(size = 3)

That makes it stand out a bit: if you look carefully, one of the green points (observation 6) is plotted as a triangle rather than a circle, as the legend for is6 indicates. (I plotted all the points bigger to make this easier to see.)

Since observation #6 is in group b, it appears as a green triangle. What makes it least like a b? Well, it has the smallest y2 value of any of the b’s (which makes it most like an a of any of the b’s), and it has the largest y1 value (which makes it most like a c of any of the b’s). But still, it’s nearer the greens than anything else, so it’s still more like a b than it is like any of the other groups.
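Two things about posterior probabilities are worth checking for yourself: they add up to 1 across the groups for each observation, and the predicted class is the group with the biggest one. A sketch with iris standing in for our data (the names row_totals, best and least_sure are mine):

```r
library(MASS)

fit <- lda(Species ~ ., data = iris)
p <- predict(fit)

# Each row of posterior probabilities should add up to 1
row_totals <- rowSums(p$posterior)

# The group with the largest posterior probability in each row
best <- colnames(p$posterior)[apply(p$posterior, 1, which.max)]
agree <- all(best == as.character(p$class))

# The observation whose largest posterior probability is smallest,
# that is, the one the analysis is least sure about
least_sure <- which.min(apply(p$posterior, 1, max))
round(p$posterior[least_sure, ], 3)
```

The last line is the analogue of hunting down observation #6: it picks out the observation with the most doubt about it.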

\(\blacksquare\)

35.11 What distinguishes people who do different jobs?

244 people work at a certain company. They each have one of three jobs: customer service, mechanic, dispatcher. In the data set, these are labelled 1, 2 and 3 respectively. In addition, they each are rated on scales called outdoor, social and conservative. Do people with different jobs tend to have different scores on these scales, or, to put it another way, if you knew a person’s scores on outdoor, social and conservative, could you say something about what kind of job they were likely to hold? The data are in link.

- Read in the data and display some of it.

Solution

The usual. This one is aligned columns. I’m using a “temporary” name for my read-in data frame, since I’m going to create the proper one in a moment.

my_url <- "http://ritsokiguess.site/datafiles/jobs.txt"

jobs0 <- read_table(my_url)

Warning: Missing column names filled in: 'X6' [6]

── Column specification ────────────────────────────────────────────────────────

cols(

outdoor = col_double(),

social = col_double(),

conservative = col_double(),

job = col_double(),

id = col_double(),

X6 = col_character()

)

Warning: 244 parsing failures.

row col expected actual file

1 -- 6 columns 5 columns 'http://ritsokiguess.site/datafiles/jobs.txt'

2 -- 6 columns 5 columns 'http://ritsokiguess.site/datafiles/jobs.txt'

3 -- 6 columns 5 columns 'http://ritsokiguess.site/datafiles/jobs.txt'

4 -- 6 columns 5 columns 'http://ritsokiguess.site/datafiles/jobs.txt'

5 -- 6 columns 5 columns 'http://ritsokiguess.site/datafiles/jobs.txt'

... ... ......... ......... .............................................

See problems(...) for more details.

jobs0

We got all that was promised, plus a label id for each employee, which we will from here on ignore.7

\(\blacksquare\)

- Note the types of each of the variables, and create any new variables that you need to.

Solution

These are all numbers (read in as dbl, according to the column specification, though the values are whole numbers). But, the job ought to be a factor: the labels 1, 2 and 3 have no meaning as such, they just label the three different jobs. (I gave you a hint of this above.) So we need to turn job into a factor. I think the best way to do that is via mutate, and then we save the new data frame into one called jobs that we actually use for the analysis below:

job_labels <- c("custserv", "mechanic", "dispatcher")

jobs0 %>%

mutate(job = factor(job, labels = job_labels)) -> jobs

I lived on the edge and saved my factor job into a variable with the same name as the numeric one. I should check that I now have the right thing:

jobs

I like this better because you see the actual factor levels rather than the underlying numeric values by which they are stored.

All is good here. If you forget the labels thing, you’ll get a factor, but its levels will be 1, 2, and 3, and you will have to remember which jobs they go with. I’m a fan of giving factors named levels, so that you can remember what stands for what.8
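Here is the difference in miniature, with a few made-up job codes (job_codes is invented for illustration; job_labels is the same as above):

```r
job_codes <- c(1, 3, 2, 2, 1)
job_labels <- c("custserv", "mechanic", "dispatcher")

# Without labels: a factor, but its levels are just "1", "2", "3"
plain <- factor(job_codes)
levels(plain)

# With labels: the first label goes with the smallest code, and so on
named <- factor(job_codes, labels = job_labels)
as.character(named)
```

The labels are matched to the sorted distinct values, so 1 becomes custserv, 2 mechanic and 3 dispatcher, no matter what order they appear in the data.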

Extra: another way of doing this is to make a lookup table, that is, a little table that shows which job goes with which number:

lookup_tab <- tribble(

~job, ~jobname,

1, "custserv",

2, "mechanic",

3, "dispatcher"

)

lookup_tab

I carefully put the numbers in a column called job because I want to match these with the column called job in jobs0:

jobs0 %>%

left_join(lookup_tab) -> jobs

Joining with `by = join_by(job)`

jobs %>% slice_sample(n = 20)

You see that each row has the name of the job that employee has, in the column jobname, because the job id was looked up in our lookup table. (I displayed some random rows so you could see that it worked.)

\(\blacksquare\)

- Run a multivariate analysis of variance to convince yourself that there are some differences in scale scores among the jobs.

Solution

You know how to do this, right? This one is the easy way:

response <- with(jobs, cbind(social, outdoor, conservative))

response.1 <- manova(response ~ jobname, data = jobs)

summary(response.1)

Df Pillai approx F num Df den Df Pr(>F)

jobname 2 0.76207 49.248 6 480 < 2.2e-16 ***

Residuals 241

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Or you can use Manova. That is mostly for practice here, since there is no reason to make things difficult for yourself:

library(car)

response.2 <- lm(response ~ job, data = jobs)

summary(Manova(response.2))

Type II MANOVA Tests:

Sum of squares and products for error:

social outdoor conservative

social 4503.0829 669.0553 161.5635

outdoor 669.0553 5236.1506 -190.3804

conservative 161.5635 -190.3804 2739.6832

------------------------------------------

Term: job

Sum of squares and products for the hypothesis:

social outdoor conservative

social 2792.339 -1128.5471 -1331.9406

outdoor -1128.547 456.1116 538.3148

conservative -1331.941 538.3148 635.3331

Multivariate Tests: job

Df test stat approx F num Df den Df Pr(>F)

Pillai 1 0.519350 86.44129 3 240 < 2.22e-16 ***

Wilks 1 0.480650 86.44129 3 240 < 2.22e-16 ***

Hotelling-Lawley 1 1.080516 86.44129 3 240 < 2.22e-16 ***

Roy 1 1.080516 86.44129 3 240 < 2.22e-16 ***

---

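Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

This version gives the four different versions of the test (rather than just the Pillai test that manova gives), but the results are in this case identical for all of them. (Look at the Df, though: it is 1 here, against 2 for manova above. That is because this fit used the numeric job, which lm treats as a quantitative explanatory variable rather than as three groups, and with 1 df the four tests always agree. Using jobname in the lm instead gives the proper three-group comparison.)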

So: oh yes, there are differences (on some or all of the variables, for some or all of the groups). So we need something like discriminant analysis to understand the differences.
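One habit worth getting into with output like this is to glance at the Df for the grouping variable: with three groups it should be 2. If the groups are stored as numbers, R will happily treat them as a quantitative variable with 1 df instead. A sketch with iris as a stand-in, where group_number is a numeric copy of the species that I made for illustration:

```r
resp <- with(iris, cbind(Sepal.Length, Sepal.Width))
group_factor <- iris$Species
group_number <- as.numeric(iris$Species)  # codes 1, 2, 3

# Groups as a factor: 3 groups, so 2 df
df_factor <- summary(manova(resp ~ group_factor))$stats[1, "Df"]

# Groups as numbers: treated like a regression slope, so 1 df
df_number <- summary(manova(resp ~ group_number))$stats[1, "Df"]

c(factor = df_factor, number = df_number)
```

Both versions run without complaint, which is why the Df is the thing to look at.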

We really ought to follow this up with Box’s M test, to be sure that the variances and correlations for each variable are equal enough across the groups, but we note off the top that the P-values (all of them) are really small, so there ought not to be much doubt about the conclusion anyway:

summary(BoxM(response, jobs$job))

Box's M Test

Chi-Squared Value = 25.64176 , df = 12 and p-value: 0.0121

This is small, but not (for this test) small enough to worry about (it’s not less than 0.001).

This, and the lda below, actually works perfectly well if you use the original (integer) job, but then you have to remember which job number is which.

\(\blacksquare\)

- Run a discriminant analysis and display the output.

Solution

Now jobname is the “response”:

job.1 <- lda(jobname ~ social + outdoor + conservative, data = jobs)

job.1

Call:

lda(jobname ~ social + outdoor + conservative, data = jobs)

Prior probabilities of groups:

custserv dispatcher mechanic

0.3483607 0.2704918 0.3811475

Group means:

social outdoor conservative

custserv 24.22353 12.51765 9.023529

dispatcher 15.45455 15.57576 13.242424

mechanic 21.13978 18.53763 10.139785

Coefficients of linear discriminants:

LD1 LD2

social 0.19427415 -0.04978105

outdoor -0.09198065 -0.22501431

conservative -0.15499199 0.08734288

Proportion of trace:

LD1 LD2

0.7712 0.2288

\(\blacksquare\)

- Which is the more important, LD1 or LD2? How much more important? Justify your answer briefly.

Solution

Look at the “proportion of trace” at the bottom. The value for LD1 is quite a bit higher, so LD1 is quite a bit more important when it comes to separating the groups. LD2 is, as I said, less important, but is not completely worthless, so it will be worth taking a look at it.

\(\blacksquare\)

- Describe what values for an individual on the scales will make each of LD1 and LD2 high.

Solution

This is a two-parter: decide whether each scale makes a positive, negative or zero contribution to the linear discriminant (looking at the “coefficients of linear discriminants”), and then translate that into what would make each LD high. Let’s start with LD1:

Its coefficients on social, outdoor and conservative are respectively positive (\(0.19\)), zero (\(-0.09\); my call) and negative (\(-0.15\)). Where you draw the line is up to you: if you want to say that outdoor’s contribution is negative, go ahead. This means that LD1 will be high if social is high and if conservative is low. (If you thought that outdoor’s coefficient was negative rather than zero, if outdoor is low as well.) The overall sign of a linear discriminant is arbitrary, so go by the signs in the output you actually have.

Now for LD2: I’m going to call outdoor’s coefficient of \(-0.22\) negative and the other two zero, so that LD2 is high if outdoor is low. Again, if you made a different judgement call, adapt your answer accordingly.

\(\blacksquare\)

- The first group of employees, customer service, have the highest mean on social and the lowest mean on both of the other two scales. Would you expect the customer service employees to score high or low on LD1? What about LD2?

Solution

In the light of what we said in the previous part, the customer service employees, who are high on social and low on conservative, should be high (positive) on LD1, since both of these means are pointing that way. As I called it, the only thing that matters to LD2 is outdoor, which is low for the customer service employees, and thus LD2 for them will be high (negative coefficient).
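You can check this kind of reasoning by multiplying the group means by the coefficients. The sketch below types in both tables from the printed lda output above (the names coefs, means and raw_scores are mine). These are not the actual scores, because predict also subtracts a constant from each discriminant, but that does not change which group comes out highest or lowest.

```r
# Coefficients of linear discriminants, copied from the output
coefs <- matrix(
  c( 0.19427415, -0.04978105,
    -0.09198065, -0.22501431,
    -0.15499199,  0.08734288),
  nrow = 3, byrow = TRUE,
  dimnames = list(c("social", "outdoor", "conservative"), c("LD1", "LD2"))
)

# Group means, copied from the output
means <- matrix(
  c(24.22353, 12.51765,  9.023529,
    15.45455, 15.57576, 13.242424,
    21.13978, 18.53763, 10.139785),
  nrow = 3, byrow = TRUE,
  dimnames = list(
    c("custserv", "dispatcher", "mechanic"),
    c("social", "outdoor", "conservative")
  )
)

# One (uncentred) score per group per discriminant
raw_scores <- means %*% coefs
round(raw_scores, 2)
```

With the coefficients as printed, customer service comes out highest on LD1 and dispatchers lowest, and the mechanics come out lowest on LD2.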

\(\blacksquare\)

- Plot your discriminant scores (which you will have to obtain first), and see if you were right about the customer service employees in terms of LD1 and LD2. The job names are rather long, and there are a lot of individuals, so it is probably best to plot the scores as coloured circles with a legend saying which colour goes with which job (rather than labelling each individual with the job they have).

Solution

Predictions first, then make a data frame combining the predictions with the original data:

p <- predict(job.1)

as.data.frame(p)

d <- cbind(jobs, p)

d

Following my suggestion, plot these the standard way with colour distinguishing the jobs:

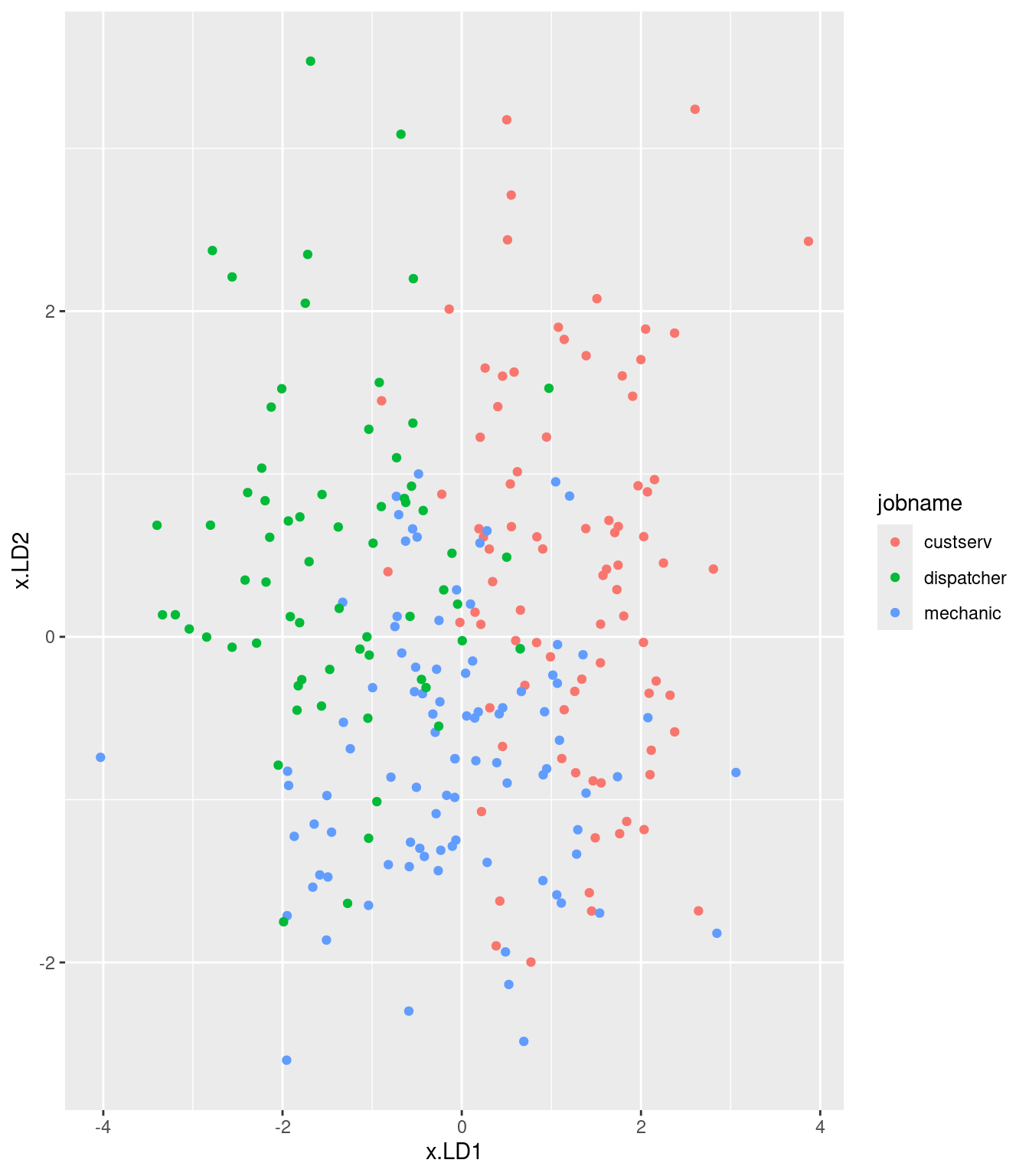

ggplot(d, aes(x = x.LD1, y = x.LD2, colour = jobname)) + geom_point()

# ggplot(d, aes(x = x.LD1, y = x.LD2, colour = class)) + geom_point()

# ggplot(d, aes(x = job, y = x.LD1)) + geom_boxplot()

I was mostly right about the customer service people: with the coefficients as printed above (positive on social, negative on conservative) they should be large on LD1, and they definitely are; large LD2 kinda. I wasn’t more right because the group means don’t tell the whole story: evidently, the customer service people vary quite a bit on outdoor, so the red dots are spread up and down the high-LD1 side of the plot.

There is quite a bit of intermingling of the three employee groups on the plot, but the point of the MANOVA is that the groups are (way) more separated than you’d expect by chance, that is if the employees were just randomly scattered across the plot.

To think back to that trace thing: here, it seems that LD1 mainly separates customer service (high LD1) from dispatchers (low LD1); the mechanics are all over the place on LD1, but they tend to be low on LD2. So LD2 does have something to say.

\(\blacksquare\)

- * Obtain predicted job allocations for each individual (based on their scores on the three scales), and tabulate the true jobs against the predicted jobs. How would you describe the quality of the classification? Is that in line with what the plot would suggest?

Solution

Use the predictions that you got before and saved in d:

with(d, table(obs = jobname, pred = class))

pred

obs custserv dispatcher mechanic

custserv 68 4 13

dispatcher 3 50 13

mechanic 16 10 67

Or, the tidyverse way:

d %>% count(jobname, class)

or:

d %>%

count(jobname, class) %>%

pivot_wider(names_from=class, values_from=n, values_fill = list(n=0))

I didn’t really need the values_fill since there are no missing frequencies, but I’ve gotten used to putting it in.

There are a lot of misclassifications, but there are a lot of people, so a large fraction of people actually got classified correctly. The biggest frequencies are of people who got classified correctly. I think this is about what I was expecting, looking at the plot: the people with high LD1 and high LD2 are obviously customer service, the ones with low LD1 and high LD2 are in dispatch, and most of the ones at the bottom are mechanics. So there will be some errors, but the majority of people should be gotten right.

The easiest pairing to get confused is customer service and mechanics, which you might guess from the plot: those customer service people with a middling LD1 score and a low LD2 score (that is, high on outdoor) could easily be confused with the mechanics. The easiest pairing to distinguish is customer service and dispatchers: on the plot, they are at opposite ends of LD1, high and low respectively.

d %>%

filter(jobname != class)

What fraction of people actually got misclassified? You could just pull out the numbers and add them up, but you know me: I’m too lazy to do that.

We can work out the total number and fraction who got misclassified. There are different ways you might do this, but the tidyverse way provides the easiest starting point. For example, we can make a new column that indicates whether a group is the correct or wrong classification:

d %>%

count(jobname, class) %>%

mutate(job_stat = ifelse(jobname == class, "correct", "wrong"))

From there, we count up the correct and wrong ones, recognizing that we want to total up the frequencies in n, not just count the number of rows:

d %>%

count(jobname, class) %>%

mutate(job_stat = ifelse(jobname == class, "correct", "wrong")) %>%

count(job_stat, wt = n)

and turn these into proportions:

d %>%

count(job, class) %>%

mutate(job_stat = ifelse(job == class, "correct", "wrong")) %>%

count(job_stat, wt = n) %>%

mutate(proportion = n / sum(n))

There is a count followed by another count of the first lot of counts, so the second count column has taken over the name n.

24% of all the employees got classified into the wrong job, based on their scores on outdoor, social and conservative.

This is actually not bad, from one point of view: if you just guessed which job each person did, without looking at their scores on the scales at all, you would get \({1\over 3}=33\%\) of them right, just by luck, and \({2\over3}=67\%\) of them wrong. From 67% to 24% error is a big improvement, and that is what the MANOVA is reacting to.

To figure out whether some of the groups were harder to classify than others, squeeze a group_by in early to do the counts and proportions for each (true) job:

d %>%

count(job, class) %>%

mutate(job_stat = ifelse(job == class, "correct", "wrong")) %>%

group_by(job) %>%

count(job_stat, wt = n) %>%

mutate(proportion = n / sum(n))

or even split out the correct and wrong ones into their own columns:

d %>%

count(job, class) %>%

mutate(job_stat = ifelse(job == class, "correct", "wrong")) %>%

group_by(job) %>%

count(job_stat, wt = n) %>%

mutate(proportion = n / sum(n)) %>%

select(-n) %>%

pivot_wider(names_from=job_stat, values_from=proportion)

The mechanics were hardest to get right and easiest to get wrong, though there isn’t much in it. I think the reason is that the mechanics were sort of “in the middle” in that a mechanic could be mistaken for either a dispatcher or a customer service representative, but customer service and dispatchers were more or less distinct from each other.

It’s up to you whether you prefer to do this kind of thing by learning enough about table to get it to work, or whether you want to use tidy-data mechanisms to do it in a larger number of smaller steps. I immediately thought of table because I knew about it, but the tidy-data way is more consistent with the way we have been doing things.
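If you want to go the table route, here is a minimal sketch of how it might look. The labels below are made up for illustration (they are not the jobs data), and obs, pred and lev are my own names. The idea is that the correct classifications sit on the diagonal of the table:

```r
# Hypothetical true and predicted labels, for illustration only
# (these are NOT the jobs data).
lev  <- c("a", "b", "c")
obs  <- factor(c("a", "a", "a", "b", "b", "c", "c", "c"), levels = lev)
pred <- factor(c("a", "a", "b", "b", "b", "c", "a", "c"), levels = lev)

# Same levels on both sides, so the table is square
# and the correct classifications are on the diagonal.
tab <- table(obs = obs, pred = pred)

# Overall misclassification rate: everything off the diagonal.
overall_wrong <- 1 - sum(diag(tab)) / sum(tab)

# Proportion correct within each true group (row proportions).
correct_by_group <- diag(prop.table(tab, margin = 1))

overall_wrong
correct_by_group
```

This is fewer steps than the tidyverse pipeline, but you have to know about diag and prop.table, and the result is not a data frame.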

\(\blacksquare\)

- Consider an employee with these scores: 20 on outdoor, 17 on social and 8 on conservative. What job do you think they do, and how certain are you about that? Use predict, first making a data frame out of the values to predict for.

Solution

This is in fact exactly the same idea as the data frame that I generally called new when doing predictions for other models. I think the clearest way to make one of these is with tribble:

new <- tribble(

~outdoor, ~social, ~conservative,

20, 17, 8

)

new

There’s no need for datagrid or crossing here because I’m not doing combinations of things. (I might have done that here, to get a sense for example of “what effect does a higher score on outdoor have on the likelihood of a person doing each job?”. But I didn’t.)

Then feed this into predict as the second thing:

pp1 <- predict(job.1, new)

Our predictions are these:

cbind(new, pp1)

The class thing gives our predicted job, and the posterior probabilities say how sure we are about that. So we reckon there’s a 78% chance that this person is a mechanic; they might be a dispatcher but they are unlikely to be in customer service. Our best guess is that they are a mechanic.9
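To see the three pieces that predict gives back one at a time, here is a small sketch. I am assuming the built-in iris data as a stand-in (it is not the jobs data), and the values in new_obs are ones I picked for illustration:

```r
library(MASS)

# Stand-in data: the built-in iris data frame, NOT the jobs data.
fit <- lda(Species ~ ., data = iris)

# One new observation to predict for (values chosen for illustration).
new_obs <- data.frame(
  Sepal.Length = 6.0, Sepal.Width = 3.0,
  Petal.Length = 4.5, Petal.Width = 1.5
)

p <- predict(fit, new_obs)

p$class                # predicted group
round(p$posterior, 3)  # posterior probability of each group
round(p$x, 2)          # scores on LD1 and LD2
```

The predicted class is the group with the biggest posterior probability, and the posterior probabilities for one observation add up to 1.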

Does this pass the sanity-check test? First figure out where our new employee stands compared to the others:

summary(jobs)

outdoor social conservative job

Min. : 0.00 Min. : 7.00 Min. : 0.00 Min. :1.000

1st Qu.:13.00 1st Qu.:17.00 1st Qu.: 8.00 1st Qu.:1.000

Median :16.00 Median :21.00 Median :11.00 Median :2.000

Mean :15.64 Mean :20.68 Mean :10.59 Mean :1.922

3rd Qu.:19.00 3rd Qu.:25.00 3rd Qu.:13.00 3rd Qu.:3.000

Max. :28.00 Max. :35.00 Max. :20.00 Max. :3.000

id X6 jobname

Min. : 1.00 Length:244 Length:244

1st Qu.:21.00 Class :character Class :character

Median :41.00 Mode :character Mode :character

Mean :41.95

3rd Qu.:61.25

Max. :93.00

Their score on outdoor is above average, but their scores on the other two scales are below average (right on the 1st quartile in each case).

Go back to the table of means from the discriminant analysis output. The mechanics have the highest average for outdoor, they’re in the middle on social and they are lowish on conservative. Our new employee is at least somewhat like that.

Or, we can figure out where our new employee sits on the plot. The output from predict gives the predicted LD1 and LD2, which are 0.71 and \(-1.02\) respectively. This employee would sit to the right of and below the middle of the plot: in the greens, but with a few blues nearby: most likely a mechanic, possibly a dispatcher, but likely not customer service, as the posterior probabilities suggest.

Extra: I can use the same mechanism to predict for a combination of values. This would allow for the variability of each of the original variables to differ, and enable us to assess the effect of, say, a change in conservative over its “typical range”, which we found out above with summary(jobs). I’ll take the quartiles, in my usual fashion:

outdoors <- c(13, 19)

socials <- c(17, 25)

conservatives <- c(8, 13)

The IQRs are not that different, which says that what we get here will not be that different from the “coefficients of linear discriminants” above:

new <- crossing(

outdoor = outdoors, social = socials,

conservative = conservatives

)

pp2 <- predict(job.1, new)

px <- round(pp2$x, 2)

cbind(new, pp2$class, px)

The highest (most positive) LD1 score goes with high outdoor, low social, high conservative (and being a dispatcher). It is often interesting to look at the second-highest one as well: here that is low outdoor, and the same low social and high conservative as before. That means that outdoor has nothing much to do with LD1 score. Being low social is strongly associated with LD1 being positive, so that’s the important part of LD1.

What about LD2? The most positive LD2 are these:

LD2 outdoor social conservative

====================================

0.99 low low high

0.59 low high high

0.55 low low low

These most consistently go with outdoor being low.

Is that consistent with the “coefficients of linear discriminants”?

job.1$scaling

LD1 LD2

social 0.19427415 -0.04978105

outdoor -0.09198065 -0.22501431

conservative -0.15499199 0.08734288

Very much so: outdoor has nothing much to do with LD1 and everything to do with LD2.
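If you want to convince yourself that the LD scores really are built from those coefficients, here is a sketch that rebuilds them by hand. I am again assuming the built-in iris data as a stand-in (not the jobs data); fit, X, centre and manual are my own names. As far as I know, this mirrors what predict does for an lda fit from MASS when you leave the prior probabilities alone:

```r
library(MASS)

# Stand-in data: built-in iris, NOT the jobs data.
fit <- lda(Species ~ ., data = iris)
X <- as.matrix(iris[, 1:4])

# Centre each variable at the prior-weighted average of the group means,
# then multiply by the coefficients of linear discriminants.
centre <- colSums(fit$prior * fit$means)
manual <- scale(X, center = centre, scale = FALSE) %*% fit$scaling

# Compare with the scores that predict() returns.
all.equal(unname(manual), unname(predict(fit)$x))
```

So a variable with a coefficient near zero on an LD barely moves the score on that LD, however much the variable itself changes.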

\(\blacksquare\)

35.12 Observing children with ADHD

A number of children with ADHD were observed by their mother or their father (only one parent observed each child). Each parent was asked to rate occurrences of behaviours of four different types, labelled q1 through q4 in the data set. Also recorded was the identity of the parent doing the observation for each child: 1 is father, 2 is mother.

Can we tell (without looking at the parent column) which parent is doing the observation? Research suggests that rating the degree of impairment in different categories depends on who is doing the rating: for example, mothers may feel that a child has difficulty sitting still, while fathers, who might do more observing of a child at play, might think of such a child as simply being “active” or “just being a kid”. The data are in link.

- Read in the data and confirm that you have four ratings and a column labelling the parent who made each observation.

Solution

As ever:

my_url <- "http://ritsokiguess.site/datafiles/adhd-parents.txt"

adhd <- read_delim(my_url, " ")

Rows: 29 Columns: 5

── Column specification ────────────────────────────────────────────────────────

Delimiter: " "

chr (1): parent

dbl (4): q1, q2, q3, q4

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.

adhd

Yes, exactly that.

\(\blacksquare\)

- Run a suitable discriminant analysis and display the output.

Solution

This is as before:

adhd.1 <- lda(parent ~ q1 + q2 + q3 + q4, data = adhd)

adhd.1

Call:

lda(parent ~ q1 + q2 + q3 + q4, data = adhd)

Prior probabilities of groups:

father mother

0.1724138 0.8275862

Group means:

q1 q2 q3 q4

father 1.800 1.000000 1.800000 1.800

mother 2.375 2.791667 1.958333 1.625

Coefficients of linear discriminants:

LD1

q1 -0.3223454

q2 2.3219448

q3 0.1411360

q4 0.1884613

\(\blacksquare\)

- Which behaviour item or items seem to be most helpful at distinguishing the parent making the observations? Explain briefly.

Solution

Look at the Coefficients of Linear Discriminants. The coefficient of q2, 2.32, is much larger in size than the others, so it’s really q2 that distinguishes mothers and fathers. Note also that the group means for fathers and mothers are fairly close on all the items except for q2, where they are a long way apart. So that’s another hint that it might be q2 that makes the difference. But comparing means alone might be deceiving: one of the other qs, even though its means are close for mothers and fathers, might actually do a good job of distinguishing mothers from fathers, because it has a small SD overall.
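One way to allow for variables having different SDs (this is my addition, not something the question asks for) is to multiply each coefficient by that variable’s pooled within-group SD, so that the coefficients are on a comparable scale. A sketch, assuming the built-in iris data as a stand-in for the ADHD data, with fit, X, resid, pooled_sd and std_coef being my own names:

```r
library(MASS)

# Stand-in data: built-in iris, NOT the ADHD data.
fit <- lda(Species ~ ., data = iris)
X <- as.matrix(iris[, 1:4])

# Subtract each observation's own group mean from it.
resid <- X - fit$means[as.character(iris$Species), ]

# Pooled within-group SD of each variable
# (divide by number of observations minus number of groups).
pooled_sd <- sqrt(colSums(resid^2) / (nrow(X) - nlevels(iris$Species)))

# Rescale: each row of the coefficient matrix times that variable's SD.
std_coef <- fit$scaling * pooled_sd
round(std_coef, 2)
```

A variable with a small raw coefficient but a large SD can come out looking more important here than it did in the raw output, and the other way around.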

\(\blacksquare\)

- Obtain the predictions from the lda, and make a suitable plot of the discriminant scores, bearing in mind that you only have one LD. Do you think there will be any misclassifications? Explain briefly.

Solution

The prediction is the obvious thing. I take a quick look at it (using glimpse), but only because I feel like it:

adhd.2 <- predict(adhd.1)

glimpse(adhd.2)

List of 3

$ class : Factor w/ 2 levels "father","mother": 1 2 1 2 2 2 2 2 2 2 ...

$ posterior: num [1:29, 1:2] 9.98e-01 5.57e-06 9.98e-01 4.97e-02 4.10e-05 ...

..- attr(*, "dimnames")=List of 2

.. ..$ : chr [1:29] "1" "2" "3" "4" ...

.. ..$ : chr [1:2] "father" "mother"

$ x : num [1:29, 1] -3.327 1.357 -3.327 -0.95 0.854 ...

..- attr(*, "dimnames")=List of 2

.. ..$ : chr [1:29] "1" "2" "3" "4" ...

.. ..$ : chr "LD1"

The discriminant scores are in the thing called x in there. There is only LD1 (only two groups, mothers and fathers), so the right way to plot it is against the true groups, e.g. by a boxplot, first making a data frame, using cbind, containing what you need:

d <- cbind(adhd, adhd.2)

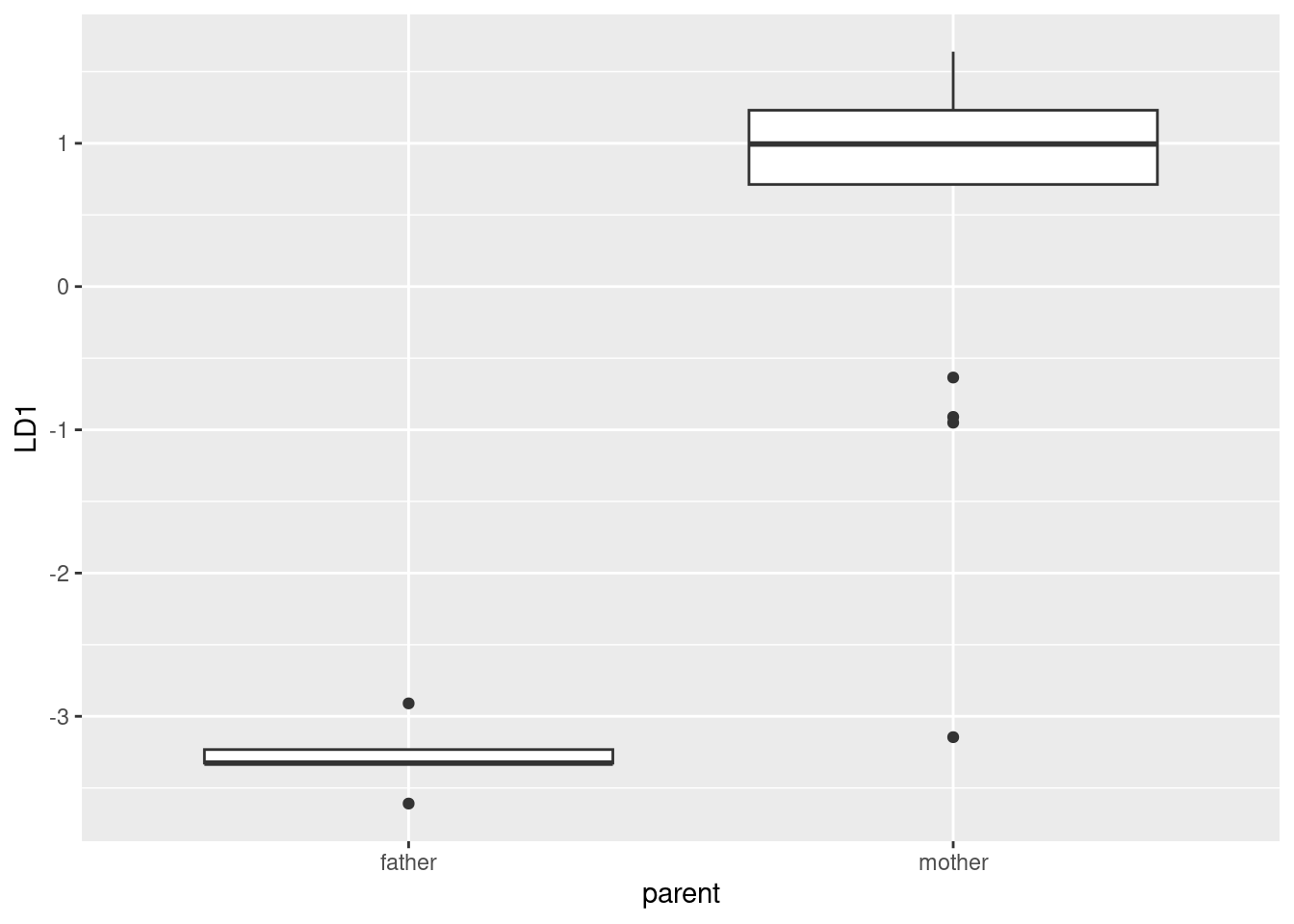

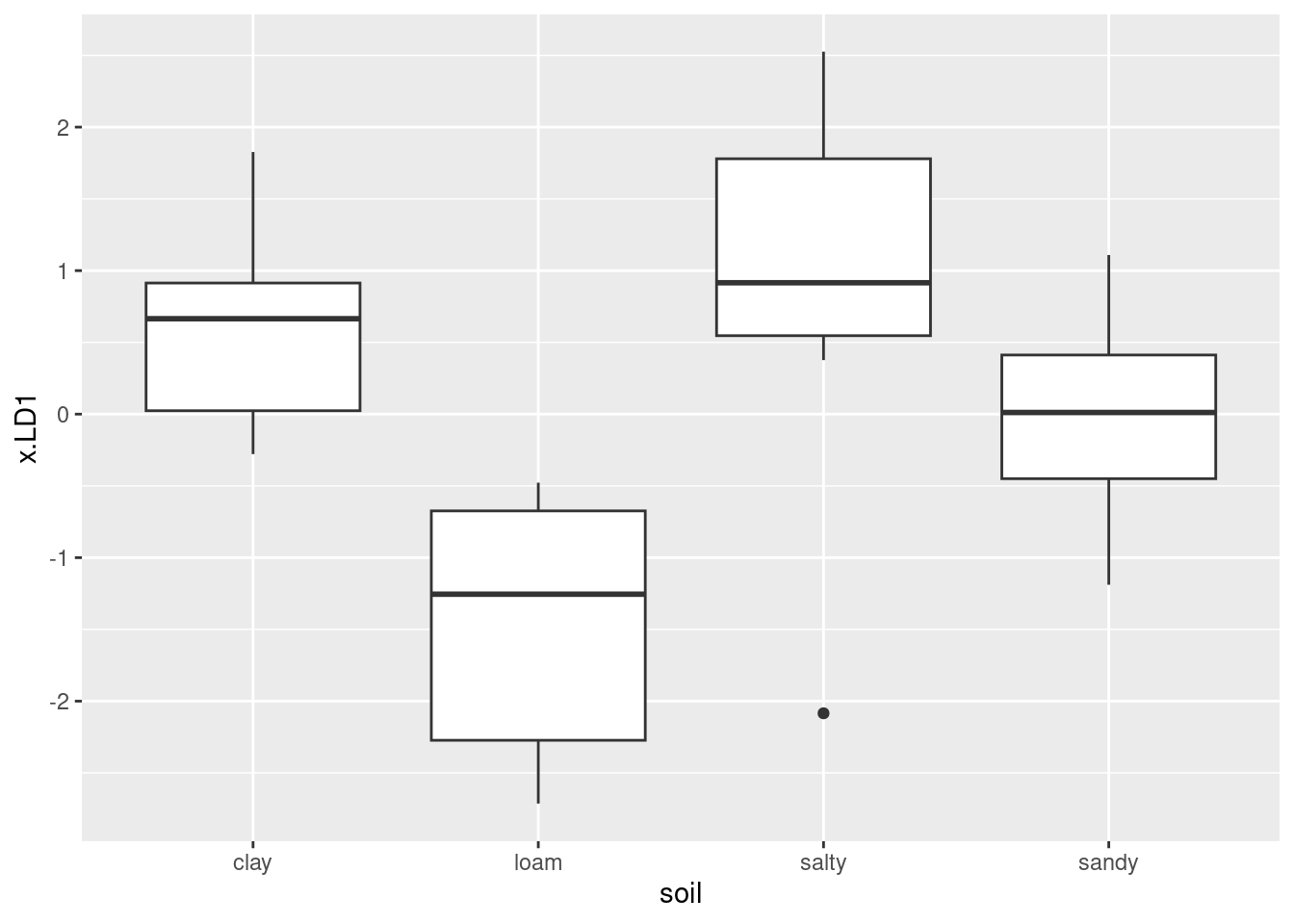

head(d)

ggplot(d, aes(x = parent, y = LD1)) + geom_boxplot()

The fathers look to be a very compact group with LD1 score around \(-3\), so I don’t foresee any problems there. The mothers, on the other hand, have outliers: there is one with LD1 score beyond \(-3\) that will certainly be mistaken for a father. There are a couple of other unusual LD1 scores among the mothers, but a rule like “anything above \(-2\) is called a mother, anything below is called a father” will get these two right. So I expect that the one very low mother will get misclassified, but that’s the only one.
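To make that rule concrete, here is a tiny sketch applying it to the first five LD1 scores shown in the glimpse output above. The cutoff of \(-2\) is the eyeballed one from the boxplot, not necessarily the boundary that lda itself uses, and ld1, cutoff and guess are my own names:

```r
# LD1 scores for the first five children, copied from the glimpse() output.
ld1 <- c(-3.327, 1.357, -3.327, -0.95, 0.854)

# The informal rule from the text; -2 is an eyeballed cutoff,
# not the boundary that lda itself uses.
cutoff <- -2
guess <- ifelse(ld1 > cutoff, "mother", "father")
guess
```

For these five, the rule calls the first and third a father and the rest a mother, which agrees with the class values in the glimpse output (1 2 1 2 2).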

\(\blacksquare\)

- Obtain the predicted group memberships and make a table of actual vs. predicted. Were there any misclassifications? Explain briefly.

Solution

Use the predictions from the previous part, and the observed parent values from the original data frame. Then use either table or tidyverse to summarize.

with(d, table(obs = parent, pred = class))

pred

obs father mother

father 5 0

mother 1 23

Or,

d %>% count(parent, class)

or

d %>%

count(parent, class) %>%

pivot_wider(names_from=class, values_from=n, values_fill = list(n=0))

One of the mothers got classified as a father (evidently that one with a very negative LD1 score), but everything else is correct.

This time, by “explain briefly” I mean something like “tell me how you know there are or are not misclassifications”, or “describe any misclassifications that occur” or something like that.

Extra: I was curious — what is it about that one mother that caused her to get misclassified? (I didn’t ask you to think further about this, but in case you are curious as well.)

First, which mother was it? Let’s begin by adding the predicted classification to the data frame, and then we can query it by asking to see only the rows where the actual parent and the predicted parent were different. I’m also going to create a column id that will give us the row of the original data frame:

d %>%

mutate(id = row_number()) %>%